2

International Organizations: Adapters or Learners?

I wish to discover whether those who act on behalf of states believe that international organizations have been used effectively for collective problem solving or whether they ought to be used differently, more effectively. Collective problem solving among 160-odd states of widely different cultural commitments and with divergent historical memories would seem to depend on the ability to transcend cultural and historical boundaries, to establish transcultural and transideological shared meanings. Far from being a one-time event, the sharing of meanings is a continuous activity, partly mediated through the work of international organizations. These entities change the way they attempt to solve problems as their members debate effectiveness; they change by either adapting or learning. In this chapter I explore the difference between the two modes of change. International organizations are "satisficers," not optimizers. Changing them toward greater effectiveness involves the analysts' judgments about the ability of political institutions to act as innovators; this chapter explores that ability. Innovation, adaptation, and learning, in turn, depend on the knowledge available to those who worry about the effectiveness of international organizations. Therefore, the first task of this chapter must be the exploration of the notion of consensual knowledge.

International Organizations as Problem Solvers

Designing an international organization is a political activity; it does not resemble problem solving in architecture. International organizations are coalitions of coalitions. They are animated by coalitions of states acting out their interests; these international coalitions often are expressions of coalitions of interests at the national level, both bureaucratic and societal. Domestic and international coalitions interact.[1]

Robert Gilpin coined the term "coalition of coalitions" to describe decision making by a state; the application to international organizations is mine. See Gilpin, War and Change in World Politics (Cambridge: Cambridge University Press, 1981), 18-19. Robert Putnam uses the same term to develop a theory of national-cum-international bargaining that is very similar to my application. See his "Diplomacy and Domestic Politics: The Logic of Two-Level Games," International Organization 42 (Summer 1988): 427-60.

Design is a function of how the members of such coalitions think about their interests and values, what compromises they can strike, how they can come to reconsider and revalue their interests. Hence I shall draw on "political" organization theory to develop concepts about adaptation and learning, not on psychological, managerial, or other extant types.[2]

Argyris and Schon distinguished "political" organization theory from five other types: organizations as group, agent, structure, system, and culture. Although the types are not mutually exclusive, the authors describe a political emphasis thus:

Organizations are primarily understandable as interest groups for the control of resources and territory. Organizations are themselves made up of contending parties, and in order to understand the behavior of organizations one must understand the nature of internal and external conflicts, the distribution of power among contending groups, and the processes by which conflicts of powers result in dominance, submission, compromise or stalemate. (Chris Argyris and Donald A. Schon, Organizational Learning: A Theory of Action Perspective [Reading, Mass.: Addison-Wesley, 1978], 328)

Learning, in a political perspective, they argue, can be studied in two ways: first, as a game of strategy, in which the analyst puts himself into the minds of the actors; and, second, as a way by which the actors achieve "collective awareness of the processes of contention in which they are engaged, gaining thereby the possibility of converting contention into cooperation" (ibid., 329).

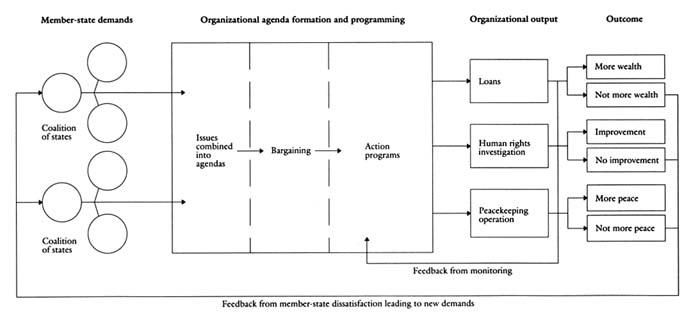

Let us suppose that after an initial period of satisfaction with the performance of an international organization, certain member states have become disillusioned with the ability of the organization to solve problems. I wish to discover whether member-state dissatisfaction has enabled the organizations to learn how to solve problems collectively so as once more to give greater satisfaction. We therefore have to be concerned with a sequence. First come demands formulated by members. Demands are then filtered through an organization. The formulation of a program of action in and by the organization follows. The immediate results of the program take the form of outputs: loans made, human rights violations inspected, desertification projects launched, or a peacekeeping force deployed. Outputs lead to the longer-range consequences of these steps, experienced as satisfaction or dissatisfaction on the part of the members. The core stages are (1) demands, (2) organizational agenda formation, (3) organizational program, (4) organizational output, and (5) experience with the results of the output, or outcome (Figure 1).

Figure 1. The core stages of organizational action

Strictly speaking, only the second and third steps in the sequence take place in the organization. But obviously it is not possible to conceptualize changes of organizational form unless the consequences of organizational action or inaction as experienced by the members are made part of the analysis: the nature of the feedback from dissatisfaction with outcome to the formulation of a new set of demands is a crucial issue.[3]

My argument throughout this study will be based on how organizations process dissatisfaction; I will not address situations in which all member states are satisfied with outcomes. My reason for this choice is as follows. Organizations entrusted with a certain mission may certainly discharge their tasks in such a way that everybody is generally satisfied. Although this happy state of affairs is extremely rare in the lives of international organizations, very likely the Universal Postal Union and the World Meteorological Organization are entities whose work engenders minimal dissatisfaction. Therefore, they are not under pressure to change their ways—to adapt or to learn. Since my task is to inquire into processes of adaptation and learning (and the absence of dissatisfaction means that the stimulus to adapt or learn is lacking), the experience of such successful organizations is uninteresting to me.

So is the feedback from output to programming because it captures the administrative experiences of the organization's staff when it monitors its own work. If learning takes place at all, it must occur as a result of these feedback processes. Therefore, although our concern is with the shape of the organization as such, we cannot explain changes in shape without paying attention to every step in the sequence. Still, our concern is not with the moral quality of the outcomes. Their character is irrelevant to the exploration. What matters is whether member states, not the observers, are satisfied or unhappy with the outcomes. A final step in the sequence might be an evaluation (moral or not) of whether the international system as a whole, or some regime within it, also changed as a result of the preceding activity. Such an evaluation, however, is the province of the observer passing judgment on history; it is not my concern at this time. True to my commitment to the logical priority of knowledge over interest and power, I begin the exploration of the consequences of dissatisfaction with organizational performance with a discussion of consensual knowledge.

Consensual Knowledge

Our concern is this: How does knowledge about nature and society make the trip from lecture halls, think tanks, libraries, and documents to the minds of political actors? How does knowledge, by its nature debatable and debated, become sufficiently accepted to enter the decision-making process? In order to give an account of this process, it is not necessary that we also explain how the relevant information and theories came into existence. We need not and do not deny that many terms and ideas that enter the process remain contentious and contested. Nor do we deny that the political interests experienced by actors are one determinant of which kind of knowledge will be preferred as a basis for decision. I make no claim that consensual knowledge is absolutely different from political ideology; on the contrary, the line between the two is often barely visible. Some will say that consensual knowledge is merely science-derived transideological and transcultural ideology. I would contest such a claim with only a mild amendment, not challenge it fundamentally, and yet make a case that political choice infused with consensual knowledge is different from, and more pervasive than, choice informed exclusively by immediate calculations of material interest or by the availability of superior power.

By consensual knowledge I mean generally accepted understandings about cause-and-effect linkages about any set of phenomena considered important by society, provided only that the finality of the accepted chain of causation is subject to continuous testing and examination through adversary procedures. Cause-effect chains are derived from information, scientific and nonscientific, available about a given subject and considered authoritative by the interested parties—though the authoritativeness is always temporary. Consensual knowledge is socially constructed and therefore inseparable from the vagaries of human communication. It is not true or perfect or complete knowledge.

We lack a totally consensual criterion for determining truth, perfection, and completeness. It may even be true that what is claimed as consensual knowledge by a bureaucracy is known to be flawed. Yet even this guilty knowledge may be presented to the public as valid merely to protect the mission and the integrity of the organization. In so doing, the nonknowledge interests of the parties concerned are also being protected. Knowledge is not in principle opposed to interest; it is, in the extreme case, the handmaiden of interest.

Consensual knowledge may originate as an ideology. It differs from ideologies only in that it is constantly challenged from within and without and must justify itself by submitting its claims to truth tests considered generally acceptable. Unless such testing takes place, it is impossible to speak of any kind of error correction because the criteria for determining acceptable and undesired outcomes would differ according to the actor concerned. Consensual knowledge differs from ideology-derived interests because it must constantly prove itself against rival formulas claiming to solve problems better. The acceptability, the very quality, of consensus that makes the kind of knowledge of concern to us different from other claims "to know" is the fact that consensus must survive the process of social selection by demonstrating its ability to excel in solving problems.

I hold that such a process describes generally how governments and public organizations have learned to deal with most of the problems their constituents have imposed on them in the twentieth century, with the exception of changed behavior owing entirely to the success of a political revolution, which simply gets rid of competing notions of cause and effect. Our conceptions of what constitutes a problem to be solved by way of public policy have been irretrievably infected by the results of scientific knowledge about nature and society that have gained widespread acceptance.

In what ways can scientific knowledge become a shaper of political decisions? The different ways coexist in time and in space; far from being mutually exclusive, they illustrate four different routes through which scientific information can become politically relevant, even though such information is socially constructed and therefore loses whatever claim to autonomous truth it may have been able to defend.

We can think of knowledge as social epistemology, as a shaper of world views and of notions of causation whereby the intellectual commitments of the seventeenth-century scientists and mathematicians penetrated the way political economists and their disciples in governments began to see the world. That process still continues, even though the informing metaphors today come from cybernetics rather than from mechanics. Science plays a major role in giving us the concepts we use in defining and seeking to solve problems, even though the substantive character of the problem and its human dimension usually are not really elucidated by the scientific metaphor.

Scientific models show up more directly in those fields of public policy in which scientific participation is continuous alongside the work of nonscientific decision makers, such as public health, environmental protection, transportation, telecommunications, and defense production. Here the models used by scientists seriously guide the way problems are defined and solutions devised; economic, political, and legal information that constrains the use of scientific models at the margin, however, also enters the process. Models of this type tend to have universal appeal because decision makers are able to subscribe to many (but not all) of them, irrespective of cultural, religious, and ideological differences. The acceptability of such models does not depend on their being advocated by a hegemonic group or class or nation.

Still other knowledge-infused modes of choice, however, are dependent on extrascientific factors. Theories of macroeconomics, of psychology and criminology, of education, and of social reform can be heavily informed by the results of scientific thinking and research. Nevertheless, the scope of theories is constrained by the fact that a dominant social group, nation, or profession advocates and uses the knowledge in question. Without such "hegemony" it is doubtful that the persuasiveness of the theory would be sufficient to allow us to call it consensual knowledge. Hegemony-aided models of knowledge nevertheless remain subject to the normal truth test.

At a still lower level of abstraction we can identify operational forms of organizing information that result in consensual cause-effect chains, even though this is done without recourse to overarching theory and is heavily influenced by immediate political interests. Newly discovered facts and newly invented models of organizing facts can certainly stimulate the evolution of such operational models. One example is given by the conjunction of information about nuclear energy technology, demand for energy in the context of industrial development, and nuclear proliferation as a military threat. This atheoretical conjunction served to define "the problem" of nuclear proliferation. The field of economic development offers similar examples. Operational models would not exist at all if the main parties did not entertain specific nonscientific objectives. Without political values and interests there would be no incentive to draw on scientific knowledge at all.

Consensual knowledge, then, can be made politically relevant by way of several paths, all available simultaneously in time and space; it is socially constructed and not given by nature; and it is often dependent on political factors for becoming truly consensual. That admitted, we must ask how new cause-effect chains, taking the place of chains accepted at an earlier time but found wanting, can achieve acceptance.

Learning

By "learning" I mean the process by which consensual knowledge is used to specify causal relationships in new ways so that the result affects the content of public policy. Learning in and by an international organization implies that the organization's members are induced to question earlier beliefs about the appropriateness of ends of action and to think about the selection of new ones, to "revalue" themselves. As this happens, international institutions are being used to cope with problems never before experienced.[4]

I owe this definition of learning to a personal communication from Robert Keohane, who tried valiantly and (I hope) successfully to improve on my earlier definitional attempts. I stress that this definition is intended to be different from the earlier one I offered in chapter 2 of Beyond the Nation-State (Stanford, Calif.: Stanford University Press, 1964).

And as the members of the organization go through the learning process, it is likely that they will arrive at a common understanding of what causes the particular problems of concern. A common understanding of causes is likely to trigger a shared understanding of solutions, and the new chain implies a set of larger meanings about life and nature not previously held in common by the participating members. Put succinctly, learning implies the sharing of larger meanings among those who learn.

Learning may involve the elaboration of new cause-effect chains more (or less) elaborate than the ones being questioned and replaced. The resulting conceptualization of the world may be more (or less) holistic than the earlier one. It may imply progress or regress, depending on the normative commitment of the observer or the preferred reading of history. At the moment, the definition of learning I offer is intended to favor no epistemological or ideological preference; I intend to cover any organizational behavior involving self-reflection leading to change. In the final chapter, however, the idea of direction and of progress is reintroduced as I emphasize my own normative stance.

Questioning an established cause-effect schema involves the disaggregation of a problem as it had been initially conceived. The problem first has to be "taken apart"; its parts have to be identified and sorted into patterns different from the ones that had been featured in an earlier round. That done, the problem has to be reaggregated into a different nested set, either more complex and comprehensive than the original one or less so. I develop an example from Karl Deutsch's work to illustrate the process as it involves shifts to greater complexity.[5]

Karl W. Deutsch, The Nerves of Government (New York: Free Press, 1963), chaps. 5, 6. The idea of disaggregating and then reaggregating the parts of a schema underlying public policy is described in Paul Diesing, Reason in Society (Urbana: University of Illinois Press, 1962). A similar idea underlies the empirical investigations of Philip Tetlock in his cognitive studies of foreign policy decision making. See "Integrative Complexity in American and Soviet Foreign Policy Rhetoric," Journal of Personality and Social Psychology 49, no. 6 (1985). My conception of the socially constructed basis of the knowledge that enters policy making comes from Burkhart Holzner and John Marx, Knowledge Application (Boston: Allyn & Bacon, 1979), chap. 4.

We have here a normative element. By what right can one describe learning as tending toward the recognition of a "higher" order of purpose? I imply that a higher order of purpose is also a better purpose, that seeing the world as a more complexly, more tightly coupled system is a more appropriate approach to problem solving in this context. My main justification for this stance comes from my reading of modern history and especially from the growing importance of systemic information in the design of public policy, a justification reserved for chapter 9.

The example from Deutsch involves ascending orders of purposes, beginning with the mechanics of warfare and ending with social transformation. The story begins with an automated antimissile battery programmed to defend the country. The purpose is to achieve immediate satisfaction. In order to make sure that this system works properly, however, a second purpose must be superimposed on it, namely, self-preservation. In order to make the battery work so as to avoid an unwanted war, it may be necessary to subject it to a higher intelligence, a model of crisis escalation that would tell not only the battery but also the entire country about the steps necessary to avoid accidental war. If this turns out to be too difficult a task, however, a third purpose must be superimposed on self-preservation, namely, the preservation of humankind. Our crisis prevention model would then be subordinated to a more general model aimed at preventing war altogether. But then suppose it is realized that the prevention of all war is not possible without having a more comprehensive understanding of human conflict and cooperation in general. Once that is realized, a fourth purpose is superimposed on the previous three, namely, the preservation of a process. The name of the game now becomes learning all one can about all kinds of links and connections among trends and events that may illuminate human conflict. Learning, in Deutsch's sense, then means the ability to shift from lower to higher orders of purposes. Organizations that subject their causal beliefs to such a process are perpetual learners.

My definition of learning differs from one often encountered in the literature on international organizations. Functionalists make two interconnected arguments about learning.[6]

See Robert E. Riggs and I. Jostein Mykletun, Beyond Functionalism (Minneapolis: University of Minnesota Press; Oslo: Universitetsforlaget, 1979), 166-76, for a full statement of the relationship between functionalist thought and learning.

One could claim that any change in behavior due to one's experience in international organizations constitutes learning, but functionalists reformulate the argument to claim that learning consists of changing one's attitude and behavior as a result of association with a successful functional (i.e., "nonpolitical") organization. Evidence of such learning is the demand that additional functional organizations, designed on the model of the first one, be set up, and that those who participated in the work of these organizations developed positive attitudes toward them.[7]

Riggs and Mykletun point out that the "demonstration effect" aspect of learning can be substantiated from historical experience, but that the "positive affect" aspect of learning must be qualified so heavily on the basis of extant empirical studies as to be descriptively almost worthless. Nevertheless, and despite the shortcomings of the functionalist explanations they present, not all aspects of the functionalist argument are shown to be wrong. See ibid., 177ff.

These formulations are less than fully helpful because they do not tell us what has to be learned or how cognitive processes have to be reorganized. Most important, they fail to spell out the institutional and political blocks to the development of positive attitudes; nor do they even ask which forms of organizational design are likely to overcome such blockages. To argue that form follows function and that function follows participation and positive experience flies in the face of experience with disappointment and unintended consequences.

Nor do organizations learn as do individuals, even though they are made up of individuals. Institutional routines interfere with learning: lessons learned by one bureaucrat do not necessarily become the collective wisdom of his or her unit. Lessons learned are informed by the interests professed by the learner. We do not assume that the interests will change only because a given routine used in their implementation has failed. Such approaches equate learning with error correction by individuals. But the observer then has to specify what the "correct" perception ought to be, and the "correct" perception inevitably turns out to be the one preferred by the observer.[8]

One author defines individual learning as "changes in intelligence and effectiveness" and the operationalization of the growth of intelligence as "(1) growth of realism, recognizing the different elements and processes actually operating in the world; (2) growth of intellectual integration in which these different elements and processes are integrated with one another in thought; (3) growth of reflective perspective about the conduct of the first two processes, the conception of the problem, and the results which the decision maker desires to achieve" (Lloyd S. Etheredge, Can Governments Learn? [New York: Pergamon, 1985], 66, emphasis in original).

Etheredge's book and his "Government Learning" (in Handbook of Political Behavior, ed. Samuel Long [New York: Plenum, 1981]) are among the first systematic efforts to conceptualize learning by public organizations, even if the lessons learned turn out to be the things the author preferred. The therapeutic component of the theory lies in the emphasis on internal communication, openness, heterodoxy, competition among ideas, personal creativeness, and its rewards. Etheredge explicitly equates government learning with lessons learned by single policy makers. The character of the routines suggested is thought to generalize learning to other decision makers. Abraham Maslow rides high in this approach.

If intelligence is not a useful guidepost for understanding organizational learning, neither is effectiveness. Any judgment regarding the effectiveness of one's performance pertains to technical rationality, not value rationality. Effectiveness is useful in explaining how adaptation occurs, but not how learning as I defined it takes place. Technical criteria for evaluating the performance of international organizations, although no doubt essential for monitoring staff performance, do not speak to the satisfaction or dissatisfaction of the clients, who may value such abstract aims as equity, quality of life, or the enjoyment of individual rights even if the organization falls short of mundane, technically effective performance.[9]

Argyris and Schon recognize as much in their distinction between "single loop" learning (which fits our notion of adaptive behavior) and "double loop" learning (Organizational Learning, chaps. 1, 2). Double-loop learning corresponds to my notion of organizational learning. Argyris and Schon differ from my approach in their emphasis on therapeutic techniques to induce double-loop learning. They identify this kind of innovative behavior with the perfection of internal communications and the relaxation of internal hierarchy—with participation. It requires an openness to criticism and the willingness to forgo stability in favor of unending change. The suggestions for designs that facilitate double-loop learning do not deal with the kinds of demands clients make, or with the outcomes of prior organizational action that have engendered disappointment with respect to values that were to be served.

If notions of affect, imitation, intelligence, effectiveness, and therapy are to be banished from our discussion of learning, who is the redefiner of cause-effect understandings? Who engages in a process that can lead to large shared meanings? Who is supposed to learn?

"An international organization learns" is a shorthand way to say that the actors representing states and members of the secretariat, working together in the organization in the search for solutions to problems on the agenda, have agreed on a new way of conceptualizing the problem. That is, it is not individuals, entire governments, blocs of governments, or entire organizations that learn; it is clusters of bureaucratic units within governments and organizations. That, of course, suggests that there can be varying rates of learning and quite different incentives to learn, depending on context, professional ethos, type of problem, type of region. The unit that learns is a particular kind of collective actor defined by its place in the organization, in world politics, in a professional and knowledge culture.

What of the presumed beneficiaries of the organization's output? Don't they matter? Organizations are supposed to contribute to the happiness and welfare of peasants, refugees, sufferers from communicable diseases, victims of malnourishment, slumdwellers, industrial workers seeking to form trade unions, and users of telecommunications networks. The demands, satisfaction, and dissatisfaction of these people do not matter in my identification of the learner because we cannot be sure whether and to what extent the demands of the potential beneficiaries actually influence the choices made by bureaucratic units. On the whole, the role of the grassroots is very remote in shaping decisions of governments and of international organizations in Third World settings. We can be certain that the disappointment of decision makers influences choice; we can be certain only of the suffering of the presumed beneficiaries, not of their power to be heard.

The stimuli that lead to learning come mostly from the external environment in which the organization is placed, not from inside the organization. As will be shown below, international organizations are hyperdependent on their environments; they can hardly be distinguished from their environments. Therefore, the main impulses that may lead to learning or adaptation are far more likely to come from the environment than from such endogenous concerns as the coordination of units, sources of revenue, staff-line relations, or internal monitoring. My investigation is therefore biased in favor of exogenous sources of change. That admitted, it still makes good sense to worry about improved organizational design because the forces emanating from the environment are far from uniform, and they push in quite different directions. It still makes sense to worry about making internal design more hospitable than it now is to learning impulses.

Can we say something about the environmental conditions most likely to lead to learning? Are there plausible predictors of learning? None is obvious; several are possible. I review them without settling the issue: the desirability of finding new cause-effect chains, the possibility of finding them, and the urgency for finding them.

Desirability refers to the incentives motivating the bureaucratic units to engage in some soul-searching. We hypothesize that actors' career goals and political opportunities to prosper are heavily identified with pleasing a certain constituency, with helping that constituency to solve its problems. Issues that can be approached with the proper conjunction of incentives on the part of decision makers are more likely to be dealt with than issues that do not offer the same opportunities. From the vantage point of desirability, it makes more sense to reexamine one's ends and values with respect, for example, to fighting epidemics than to mount campaigns in favor of human rights.

The existence of political incentives may not be enough to trigger learning. The possibility of redefining ends along new causal chains must also exist. This possibility, of course, is a function of the state of scientific knowledge, the degree of consensus it enjoys, and the availability of epistemic communities for spreading the word, a point to which we return below. The possibility of learning refers to the availability of new means that entitle actors to consider new ends not previously accessible to them.

One would think that the urgency of the problem involved has something to do with the rate of learning. Is there a crisis that calls out for immediate action, such as a famine, the imminent bankruptcy of a large country and its creditors, or an AIDS epidemic? If the requisite knowledge exists (or can rapidly be found) and if political incentives are aligned with crisis management, we would expect rapid learning to occur. We would also expect that a crisis combined with the special salience of certain issues would increase the urgency. Is health the most salient, or is malnutrition? Is either issue more salient than economic development or debt relief? Are programs and problems involving money for economic development the most salient? It is impossible to say without comparing the learning patterns of the organizations that correspond to these issues. At this point I speculate that learning is triggered in situations showing high desirability, reasonable possibility, and the conjunction of high issue salience and a crisis.

International Organizations as Satisficers

Learners are bureaucratic entities. These are normally studied and described in the idiom of organization theory. But not all aspects of organization theory are germane to our quest. A number of assumptions commonly made by all organization theorists, who derive their ideas from studying business firms and public bureaucracies, must be modified.

One is the notion of organizations seeking autonomy from and control over their environment; another is about the dominance of technically rational decision making.

Environment Dominates Organizations

Standard organization theory assumes that the entity under study seeks a maximum of control over its environment. Organizations are envisaged as systems seeking to get the better of external elements and actors that might reduce the entity's autonomy. Boundary maintenance is therefore crucial, whether the environment is envisaged as being made up of customers, suppliers, competitors, political clients, or other bureaucracies. Although it is understood that the organization must satisfy those environmental forces on which it depends, maximum attainable control over them is seen as the best way to achieve autonomy. Autonomy, in turn, is valued because it guarantees the survival of the organization in a competitive setting.

The core concept in the struggle for survival is the idea of adaptation. Adaptation implies that the organization must consistently review its operations in order to make sure that boundaries are maintained in such a fashion as to favor survival. Review implies that past errors in decision making have been identified and corrected. To adapt, then, means to so alter operations in the face of a changing environment as to be more certain of surviving and prospering. If the prevalence of competition among organizations is the challenge to survival, then principles of wise management are the techniques to assure that natural selection favors you rather than your competitor. Successful adaptation implies using the techniques of management and design found to be theoretically and practically appropriate.

Wise administration implies rational decision making. The kind of decision theorists have in mind here is the type Max Weber called "technically rational," an "efficiency-seeking" decision. The overall purpose of the decision is to improve whatever the organization's main mission is: to make a profit in producing refrigerators, to provide software services, or to grow soybeans. In the case of public organizations the mission may be helping the handicapped, improving agricultural productivity in Mali, or perfecting a defense against missile attack. The purpose is not questioned; the means for achieving it are constantly reviewed. It bears repeating that the exercise of technical rationality presumes an agreed, known, and stable ordering of preferences among the decision makers.

None of these assumptions is consistently met by international organizations. They normally do not compete for market shares, profits, or potential clienteles. Although their survival is not assured at all, failure to survive is not due to being a poor competitor. They strive to survive, of course, but they do so by seeking to please their clients with more appropriate programs. The point is that these programs do not result from the exclusive exercise of technical rationality. Conflict among coalitions precludes the existence of agreed and stable preference orderings. For international organizations survival implies more than simple adaptation because it may involve the questioning of underlying goals of action; then criteria of efficiency no longer suffice.[10]

Therefore, I cannot take advantage of the ingenious typology proposed by Lawrence G. Hrebiniak and William F. Joyce in "Organizational Adaptation: Strategic Choice and Environmental Determinism," Administrative Science Quarterly 30 (September 1985): 336-49. Hrebiniak and Joyce argue against the mutual exclusivity of environmental and volitional determinism in explaining organizational adaptation by proposing a reciprocal-feedback model in which both operate, albeit in uneven proportions. The proportions determine such things as innovation, search, questioning of ends, and degree of internal conflict. Since the proportions can change as a result of these activities, organizations can move from one type to another and not only adapt but also change themselves more basically. Although this view is akin to mine, it still requires a sharper differentiation between organization and environment than the international setting permits.

That questioning of goals, in turn, arises because boundary maintenance cannot be applied to international organizations. There is no hard and fast distinction between environment and organization. The clients are at the same time the masters and the paymasters. The staff and management serve at the pleasure of the clients. Suppliers are often the dominant coalition. Consumers can vote the management out of office. International organizations exist only because of demands emanating from the environment and survive only because they manage to please the forces there, which also dictate the programs these organizations must adopt to survive.

Bounded Rationality Dominates Decision Making

Consensual knowledge is certainly not an essential ingredient in the introduction of innovations in policy or institutional design. The kind of knowledge that is the property of actors who do not subject their beliefs to systematic verification tests—ideology, in short—undoubtedly is often a sufficient explanation for change. Some guidance from an ideology is required even for minor changes of the means of action. Programmatic innovation that dispenses with consensual knowledge is common. It is perfectly consistent with adaptive behavior, nationally and internationally. As such, it will concern us later in our exploration.

Innovation due to the emergence of consensual knowledge entails a major questioning of prior cause-effect schemata.[11]

One variant of innovation results from a revolutionary upheaval; another is based on a major cognitive development in how adherents of clashing ideologies come to see the world. When a revolution sweeps away a rival view of the world and the revolutionaries succeed in imposing their unified view on the rest of the population, a new kind of knowledge certainly inspires ensuing innovations. But it is not the kind of knowledge that emerges from a confrontation and testing of rival explanations. Since political revolutions do not occur in international politics, this variant is not relevant to my enterprise.

Few such cognitive breakthroughs are on record in the histories of international organizations. The field of public health perhaps comes closest. Even the Keynesian consensus that inspired twenty years of international trade and monetary programs came to an end. Most programmatic innovations feature an unstable mixture of slowly changing political interests (rooted in values and ideologies) and bits and pieces of consensual knowledge. That knowledge is specific to professional disciplines and to discrete issues on the political agenda such as the creation of wealth and its diffusion, the interplay of wealth creation and the diffusion of technology, the link between food production and environmental protection, and the protection of population groups thought to be especially vulnerable. Consensual knowledge is not total wisdom to guide the world to eternal bliss.

The questioning of prior cause-effect beliefs, in short, is not a "rational" process if judged by strict standards of rational choice. Matters proceed in a much sloppier way. Thought about environmental protection and resource conservation exemplifies the sloppiness. Here, a change in the urgency for choice made politicians seek out bodies of knowledge thought likely to advance their instrumental cause. The creators of that knowledge may also seek out friendly politicians as likely allies. Before the claims to knowledge become truly consensual, the interplay will take the form of an ideological debate, as happened in the early days of the international environmental movement when the interests of the developed and the developing worlds were at loggerheads. Because of the phenomenon of "embedded liberalism," Keynesian macroeconomics provided the consensual knowledge of the Bretton Woods system even though, ten years earlier, that same body of thought still had the trappings of an ideology in the United States.[12]

John Gerard Ruggie, "International Regimes, Transactions and Change," International Organization 36 (Spring 1982): 379-416.

Since I am unable to predict when the interplay of interest and knowledge becomes stable, I can claim only that learning has an elective affinity with the more fundamental changes in scientific and technical understanding, though not in the form of rational choice Weber termed "technical" and Herbert Simon calls "substantive." "If we accept values as given and consistent," says Simon,

if we postulate an objective description of the world as it really is, and if we assume that the decisionmaker's computational powers are unlimited, then two important consequences follow. First, we do not need to distinguish between the real world and the decisionmaker's perception of it; he or she perceives the world as it really is. Second, we can predict the choices that will be made by a rational decisionmaker entirely from our knowledge of the real world and without a knowledge of the decisionmaker's perceptions or modes of calculation. (We do, of course, have to know his or her utility function.)[13]

Herbert A. Simon, "Rationality in Psychology and Economics," in Rational Choice, ed. Robin M. Hogarth and Melvin W. Reder (Chicago: University of Chicago Press, 1986), 26-27. Also see Simon's Reason in Human Affairs (Stanford, Calif.: Stanford University Press, 1983), chap. 2, and "Human Nature in Politics," American Political Science Review 79 (June 1985): 293-304. Learning, then, consists of recognizing a different process of decision making, not the realization of specific values. The values will differ with the issue or problem in question and with the coalition undertaking the revaluation. They cannot be prespecified in principle. Can this conception of learning take hold in real organizations without overcoming the forces that make for motivated errors in decision making? We will consider this question below.

This situation represents substantive rationality. It is not encountered in the world of international organizations.

International organizations choose "under ambiguity." Decision making "under ambiguity" differs fundamentally from what is normally considered to be rational behavior by individuals seeking to optimize or maximize. Problems of ambiguity arise under the following conditions: Determined or even probable outcomes are rarely associated with a decision-making routine. Choice is often constrained by a condition of strategic interdependence in which the opposing choosers find themselves, a condition of which they are fully aware. Preferences are often not clear, or not clearly ordered, because the decision is not being made by a single individual, but by a bureaucratic entity. There may also be a mismatch between the assumed causal links constituting the problem the organization is called upon to resolve and the causal theory underlying the internal arrangements of units, plans, and programs designed to solve the problem. Decision-making models that are supposed to draw on the lessons of history, that are predicated on the assumption that actors deliberately learn from prior mistakes, are badly flawed because the lessons of history are rarely unambiguous: different actors certainly offer varying and equally plausible interpretations of past events that often mar decision making in the present. Learning, under such circumstances, consists of recognizing the desirability of a different process of decision making, a process that copes a little "better" with ambiguity. Learning explicitly avoids specifying what, substantively speaking, ought to be learned. Under procedural rationality, learning means designing and mastering an alternative process.[14]

James G. March, Decisions and Organizations (London: Basil Blackwell, 1988), 12-14. March mentions a final ambiguity—"decision-making is a highly contextual, sacred activity, surrounded by myth and ritual, and as much concerned with the interpretive order as with the specifics of particular choices" (ibid., 14)—which strikes me as being more applicable to the study of the World Council of Churches than to the United Nations and its agencies.

It follows that, given these constraints on substantive rationality, a decision cannot be rational normatively as well as descriptively. Decision making designed to seek optimal outcomes is normatively rational; any account of why such decisions fail to attain optimum outcomes is both descriptive of a process and evaluative about its consequence. Decision making conceded to be less than substantively rational can only be described accurately, not evaluated normatively. Decisions made "under ambiguity" remain rational because the choosers do the best they can under the circumstances. They do not act randomly. They attempt to think about trade-offs even though they are unable to rank-order their preferences. In short, they "satisfice." Nothing that is capable of being described systematically is truly irrational even if the rational content cannot be stated normatively.[15]

Amos Tversky and Daniel Kahneman suggest that decisions constrained by all of the unmotivated cognitive errors to which people are subject are still rational according to the canons of bounded rationality. See their "Rational Choice and the Framing of Decisions," in Rational Choice, 67-94.

Hence the notion of "error" and "error correction" becomes problematic.

Learning Adaptation in "Error Correction"

Adaptation and learning, in the literature on biological and cultural evolution, are synonyms. Both are tied up with survival and stability. Neither is a serviceable concept for me, as derived from Neo-Darwinian thinking, because both depend on the idea of homeostasis. Since few international organizations possess this property, we must first show how the notions of adaptation and learning differ in this context from the more familiar usage in biology and anthropology. In cybernetic-biological discourse the organism learns in order to adapt. What does it learn? It develops behaviors (which are often not based on genetic endowment) that enable it to survive under changing environmental conditions. It does so by keeping its main bodily functions within a physiologically favorable range: the organism's functions are stable if changes remain within a range that permits it to survive. Survival and stability are linked concepts; stability makes survival possible. What is learned is to compensate behaviorally for some challenge to stability. This involves short- and long-term feedback mechanisms of varying complexities. Conceptually, then, stability and survival are brought about through adaptive behavior, which is always behavior that leads to an improvement on the organism's life chances. Adaptation—"learned behavior" in the biologist's language—is always for the better.[16]

I am indebted for this conceptualization to W. Ross Ashby, Design for a Brain, 2d ed. (New York: John Wiley, 1960), esp. chaps. 1, 5, and 9. My treatment of adaptation eschews an argument using evolutionary theory either as metaphor or as analogy. I find no equivalent to natural selection, variation, differentiation, and niche seeking based on automatic processes in the behavior of international organizations. If anything, that behavior is to be associated with the deliberate creation of new niches, as described by Herbert A. Simon in Reason in Human Affairs (Stanford, Calif.: Stanford University Press, 1983), chap. 2. The only concept that suggests an analogy with biological evolution is the core idea of survival, without also implying the related idea of fitness.

Donald T. Campbell, however, considers this approach to be quite consistent with his view of evolutionary dynamics. Campbell demonstrates that behaviors that are less than rational by microeconomic criteria are more adaptive in evolutionary terms than behaviors that meet the canons of substantive rationality. Biological adaptation is, for Campbell, what I call learning, and it implies the development of traits such as reciprocity and fairness that, in turn, make survival more likely. See his "Rationality and Utility from the Standpoint of Evolutionary Biology," in Rational Choice, 171-80.

Whether adaptation is always for the better depends on who assesses the outcome. Adaptation, in our context, is the ability to change one's behavior so as to meet challenges in the form of new demands without having to revaluate one's entire program and the reasoning on which that program depends for its legitimacy. This, of course, assumes that the challenges come slowly and can be dealt with in a piecemeal fashion. Adaptation is incremental adjustment, muddling through. It relies largely on technical rationality. Because ultimate ends are not questioned, the change in behavior takes the form of a search for more adequate means to meet the new demands.

This is no mean feat. Organizations are usually bombarded with divergent demands from a variety of coalitions, and their survival is by no means a certainty. Being able to adapt without basic revaluation is a considerable achievement for the organization's leaders and members. It is a very worthwhile enterprise to try to understand how mere survival is made possible given the world setting. To be able to adapt is to be very skillful in living with one another in a conflict-ridden world.

It is common to equate adaptation (and learning) with trial-and-error processes of changing one's behavior, with relatively un-self-conscious experimentation, again suggesting a misleading parallel to natural selection. I argue that the identification of both adaptation and learning with simple error correction is inappropriate in the study of international organizational behavior.

Error occurs when decision makers take the kinds of shortcuts described by cognitive psychologists: errors of bias, judgment, and attribution. Such errors certainly violate the canons of technically rational choice. A different kind of error arises in prisoners'-dilemma situations. The "less than fully rational" result of a "rational" choice is caused by the situation constraining the choosers. In both cases the decision makers could, in principle, have avoided their mistakes if the institutional constraints and incentives conditioning the choice had been different, or if the source of their errors had been pointed out to them.

Why are these flaws in decision making relevant for us? The prevalence of cognitive shortcomings is undeniable; but they refer to individual decision makers, not bureaucratic entities. If entire units were often characterized by such traits, it is doubtful that they would survive for long. If they do show these attributes and survive anyway, the cognitive shortcomings cannot be very debilitating. Adaptation can still occur. In short, cognitive errors are relevant only if the error-prone chooser is "really crazy" and if the psychological mechanism underlying it is "hard-wired."

Errors associated with strategic interdependence are the stuff of microeconomic decision theory. Again, some mechanism for adapting to such constraints must be available because the casualty rate among international organizations is very low. No learning or adaptation at all would be in evidence if such errors were normal. However formidable they look in logic, their practical impact cannot be very great.

But what are we to make of such "errors" as an unwanted war, or an unintended arms race, or the continuation of an ineffective policy (such as area bombing after its shortcomings had been documented at the end of World War II)? Why do these "motivated" errors recur? They recur, we are told, because actors use unsystematic methods of analysis resulting in the nonuse of available knowledge. They recur also because of bureaucratic rivalries. Institutional missions become encapsulated in routines that aid the career patterns of officials rather than solve problems. Operational codes are enshrined even though they militate against the calculation of trade-offs.

Such behaviors are rooted in the routines of collective decision making. Institutionalized conduct and expectations, triggered by such things as civil service rules and bureaucratically sanctioned codes of interpersonal ties and loyalties, are the culprits. These, to be sure, may be aggravated by cognitive errors committed by individual decision makers.

Motivated errors are due to ailments common to all organizations. But they are not necessarily irrational. Since these organizational ailments are part of a larger culture that has adapted, and since they occur in organizations that have survived, they cannot be obviously self-destructive. It seems that there must be good organizational reasons for the persistence of these practices even though they do lead to unwanted consequences. Motivated errors are part of normal organizational life. We should therefore not treat them as a simple mismatch of ends and means that can be corrected by appealing to the canons of technical rationality. But can the persistence of motivated error be considered allowable in an organization that is expected to adapt or to learn?

Adaptation, Learning, and Institutional Constraints

If the notion of error means anything, it must mean that actors recognize as wrong the organization's persistence in making decisions that produce outcomes not desired by the members. Successful adaptation implies the willingness to reconsider the tie between means and ends, and to reformulate the organization's program accordingly. Successful adaptation may also call for adding new purposes or dropping old ones, without also involving a searching examination of assumptions about cause-effect links. Both activities also imply error correction.

But I argued that the persistence of motivated errors not only is natural but also may actually contribute to the continued functioning of the organizations; how, then, can I argue that adaptation includes the correction of some motivated errors? The answer is that small, incremental institutional change is required by adaptive behavior even though sudden and drastic reform is not to be expected. Civil service rules may be relaxed. New interdepartmental committees may weaken the force of tenacious bureaucratic politics. Information gathering and monitoring may be expanded by means of new routines. All these constitute marginal changes in practices that may have led to motivated errors in the past; they do not add up to a complete self-examination. But they are adaptive in the sense that recognized flaws in decision making are removed. Adaptation is change that seeks to perfect the matching of ends and means without questioning the theory of causation defining the organization's task. Adaptation does not require new consensual knowledge.

I reserve the term learning for the situations in which an organization is induced to question the basic beliefs underlying the selection of ends. True revaluation is attempted when beliefs of cause and effect are examined. Revaluation involves the recognition of connections among factors thought to constitute causes of whatever problem is to be solved, connections that had previously gone unrecognized. Revaluation implies shifting one's cognitive horizon toward beliefs about causes that are different from previous beliefs. Revaluation is made possible by the existence of bodies of knowledge not previously available. Learning involves the penetration of political objectives and programs by new knowledge-mediated understandings of connections.

Once the membership of an organization questions older beliefs and struggles to institutionalize new ways of linking knowledge to the task the entity is supposed to carry out, it must necessarily also question behaviors identified with past failures. These behaviors may well have been rooted in practices we identify as "motivated errors." Although some of these practices undoubtedly contributed to the past survival and adaptation of the organization that is now questioning itself, some other practices (such as the mode of recruiting personnel and the kind of professional training required of personnel) will now appear to be wholly indefensible. The persistence of practices recognized as motivated errors is incompatible with learning: overcoming them is a core aspect of learning.

Innovation based on the unstable interplay of consensual knowledge and interest can be of either the adaptive or the learning variety. Organizational programs may be changed in response to bits and pieces of information that are accepted as true and relevant by everybody without also evoking the need for a major soul-searching about the adequacy of the organization's basic thought patterns. The World Health Organization (WHO) could alter its family-planning programs in response to the introduction of new contraceptives without having to question the program itself; the International Monetary Fund's (IMF's) creation of various temporary compensatory-financing facilities did not call into question its overall thinking about balance-of-payments equilibrium. In short, adaptive behavior also makes use of such relatively uncontroversial knowledge as becomes available.

Adaptive behavior is common, whereas true learning is rare. The very nature of institutions is such that the dice are loaded in favor of the less demanding behavior associated with adaptation. This dictum is as applicable to domestic bureaucracies as it is to international ones. I shall now review several treatments of constraints on organizational learning and adaptation that draw on domestic experience alone, on experiences in which only one set of coalitions needs to be considered. We must remember that collective behavior change in international organizations involves a dual set of coalitions—international bargaining blocs made up of coalitions of domestic groups. If true learning is rare domestically, we ought not to expect too much at the international level.

The case for the difficulty of learning is made starkly by John Steinbruner. He tells us, again, that true analytic decision making is rarely practiced, that the recurrence of misperceptions must be taken for granted. That leaves us with the choice patterns Steinbruner calls "cybernetic" and "cognitive."[17]

John D. Steinbruner, The Cybernetic Theory of Decision (Princeton, N.J.: Princeton University Press, 1974). His unit of analysis is the bureaucratic unit in the foreign policy apparatus of the U.S. government. Although Steinbruner uses the categories found useful in the study of individual learning, he projects these to collective learning among potential domestic coalition members who will eventually have to face a foreign coalition. It is not always clear whether cognitive decision making is a variant of cybernetic choice or a separate type. For a clear demonstration that they ought to be considered separate types, with the cognitive type more likely to lead to learning than the cybernetic, see Robert Cutler, "The Cybernetic Theory Reconsidered," Michigan Journal of Political Science 1 (Fall 1981): 57-63. Expressed in terms of individual choice, analytic decision making features "theoretical thinkers," cognitive choice "uncommitted thinkers," and cybernetic choice "grooved thinking." Stein and Tanter put these categories to good use in their study of Israeli decision making during crisis, but they postulate that the three types are not mutually exclusive and that they can be combined to produce six different "paths to choice," thereby allowing gradations of changed behavior that contain elements of analytic thinking. See Janice G. Stein and Raymond Tanter, Rational Decision-Making (Columbus: Ohio State University Press, 1980), chap. 3. For a slightly different but congruent way of explaining decision making and error commitment, see James G. March and Johan P. Olsen, "Organizational Learning and the Ambiguity of the Past," in Ambiguity and Choice in Organizations (Oslo, Norway: Universitetsforlaget, 1976), chap. 4.

In both choice patterns, decision makers are motivated by wishing to "survive," rather than to serve some overriding organizational mission. Routines through which choices are made seek to limit the complexity of the real world and to reduce uncertainty by imposing limits on incoming information and by seeking to segment the problem to be solved. Outcomes are not systematically assessed, and the choice of response is limited by these constraints. Both modes result in suboptimal outputs, though they generally suffice to assure the survival of the unit. Both decision-making modes are capable of not using new knowledge or of using it very selectively; neither is able to make full use of consensual knowledge.

The chief lesson students of choice within institutions offer us is that we cannot predict events on the basis of a known distribution of preferences and capabilities among the actors. Something intervenes between these attributes and the outcomes of interest. Something goes on that constrains behavior so that neither the rationality of the market nor the power of pure analytic thought suffices to give us the explanations we seek. The routines and habits associated with the kinds of behaviors delineated by Steinbruner have independent effects. They lead to such oddities in organizational life as "garbage cans" and "martingale processes," patterns that cannot be explained except in terms of institutionally mediated historical experiences and memories.[18]

This line of analysis is opened up in James G. March and Johan P. Olsen, "The New Institutionalism," American Political Science Review 78 (September 1984): esp. 742-47. Independent institutional factors are explored in Robert O. Keohane, After Hegemony (Princeton, N.J.: Princeton University Press, 1984), chaps. 7, 11. For an application of a similar logic to the analysis of innovations in economic life, see Paul A. David, "Clio and the Economics of QWERTY," American Economic Review 75 (May 1985): 332-37.

An emphasis on independent institutional factors in no sense minimizes the continued importance of social-exchange theory in explaining how, given the constraints mentioned, actors nevertheless manage to make agreements that result in behavioral innovation. High among the insights of social-exchange theory is the norm of reciprocity. See Robert O. Keohane, "Reciprocity in International Relations," International Organization 40 (Winter 1986): 1-27.

What intervenes has a logic of its own, which, given the limits of bounded rationality from which all organizations suffer, is as rational as can be expected. Institutional constraints actually favor adaptive behavior most of the time, even though they do not make us learn by leaps and bounds to improve the outcomes produced.

Different types of institutional constraints, however, may be able to predict something about the triumph of consensual knowledge. I offer the contrast between the American and European methods of making environmental regulations as an example. In general, the attempt to devise regulations to improve the quality of the environment is more controversial in the United States than it is in Western Europe. In the United States, expert witness is pitted against expert witness, study against study, court decision against agency regulation. Each political interest seems able to create its own claim to knowledge and to make these claims penetrate the political process. Yet despite the palpable absence of consensual knowledge in the short run, these controversies often disappear eventually as better knowledge coalesces with dominant interests to produce comprehensive regulations that take into account economic consequences as well as immediate dangers to environmental quality.

In Western Europe, by contrast, the regulatory process tends to be much more sedate because the interested parties and their experts negotiate the regulations in relative privacy. The price of the European process—which superficially appears more consensual than its American counterpart—is a less sweeping set of regulations that is more respectful of the economic demands of the groups concerned—industry and labor. West European consumers suffer more than their American counterparts. In our terms, then, the learning—i.e., the recognition of a differently coupled system and the need to manage it—is more modest in Europe, though more peaceful. Pluralistic institutions predict a different course of learning than do neocorporatist ones. Both constrain learning while offering fora in which it can take place.[19]

For instances of a lack of consensual knowledge in regulatory politics, see Dorothy Nelkin, ed., Controversy (Beverly Hills: Sage, 1979). The contrast between the European and the American pattern of regulation is explored in Ronald Brickman, Sheila Jasanoff, and Thomas Ilgen, Controlling Chemicals (Ithaca: Cornell University Press, 1985). The American pattern features autonomous experts who are partisans interested in long-range effects, willing to regulate intrusively, and eager to use risk-assessment techniques of analysis. The European experts tend to be committed to cost-benefit analysis, attached to interest groups and government, and preoccupied with the short run; they prefer less rather than more regulation.

I argue that institutions constrain and facilitate learning. But which predominates? In order to make a claim that international organizations need not confine themselves to adaptive behavior, I must also make a plausible argument that the cognitive-cybernetic version of constraint on innovation need not always prevail.[20]

Such alternatives also include learning patterns owing nothing to consensual knowledge. Examples are the tit-for-tat process demonstrated in Robert Axelrod, The Evolution of Cooperation (New York: Basic Books, 1984), and the GRIT process documented in Deborah Welch Larson, "Crisis Prevention and the Austrian State Treaty," International Organization 41 (Winter 1987): 27-60.

I admit that political actors do not automatically make use of knowledge, consensual or otherwise. I concede that the acceptance of novel cause-and-effect schemata derived from scientific knowledge is not common. I know that history has no purpose, that we are not programmed to progress, or to evolve, toward some future state of bliss based on the general recognition of new causal chains. Nevertheless, we also know that there is such a thing as the norm of reciprocity, that even antagonistic parties can bargain so as to make that norm a continuing reality. And we know of a sufficient number of bargains that resulted in both adaptive and learning behavior to be convinced that habitual and routinized institutional behavior is not the inevitable victor.

How can we clarify this difficult relationship between the conservative and the innovative aspects of institutions? I shall argue, in the remainder of this chapter, that the organizations of specialists we call epistemic communities are the most significant agents of institutional innovation. I shall also argue, however, that even epistemic communities are hemmed in by many constraints. It is a mistake, nevertheless, to think of the white knights of expertise as being arrayed against the forces of darkness, the bureaucrats and politicians committed to grooved and uncommitted thinking. I introduce the notion of habit-driven behavior to describe the continuum of institutional conduct that ranges from resistance to any innovation, to adaptation, and eventually to learning. Habit-dominated institutional behavior is usually adaptive; only habit-defying behavior, however, can be considered consistent with learning. To be able to learn means taking advantage of the most permissive, the sloppiest, side of habit. Finally, since learning clearly depends on our ability to share meanings across cultural and ideological chasms, I discuss the way in which transcultural communication must be envisaged.

Epistemic Communities as Enemies of Habit-Driven Institutions

All international organizations are staffed by professional civil servants who participate in the making of decisions and who implement most of the operational measures. International secretariats are made up of people who usually carry the professional qualifications relating to their organizations' tasks: law, agriculture, medicine, and the like. They are a conduit for introducing into public policy the knowledge produced by their disciplines. Moreover, these professionals often—always in the case of U.N. specialized agencies—act in concert with like-minded professionals not in the employ of the organizations, but linked to them through nongovernmental organizations having consultative status, or by way of service on advisory panels of experts. International organizations are exposed to knowledge through the medium of epistemic communities, defined by Holzner and Marx as "those knowledge-oriented work communities in which cultural standards and social arrangements interpenetrate around a primary commitment to epistemic criteria in knowledge production and application."[21]

The ideas contained in this section have been developed largely by Peter M. Haas; see his Saving the Mediterranean (New York: Columbia University Press, 1990). The definition of "epistemic communities" is taken from Burkhart Holzner and John H. Marx, Knowledge Application (Boston: Allyn & Bacon, 1979), 108. Note that this definition differs sharply from Michel Foucault's, who apparently invented the term in The Order of Things (New York: Vintage Books, 1973). I find Foucault's usage indistinguishable from what we might call "ideological communities."

In refining his notion of "paradigm" in the second edition of his Structure of Scientific Revolutions (Chicago: University of Chicago Press, 1970), Thomas Kuhn also offers a specification of the "community," which is the unit of analysis of believers in a paradigm. His description on pp. 176-78 fits my notion. However, his specification of the content of these paradigms does not, because he seeks to make the content specific to the subject matter of the natural sciences (see pp. 181-87).

For Holzner and Marx most professional and disciplinary groups are also epistemic communities. Such groups profess standards of verification and an attachment to rules of behavior that they consider sufficient to assure the truth of their findings. They are also subject to personal and social constraints derived from the institutional pressures on their careers, which may result in deviations from stipulated norms of behavior in the production of knowledge. Robert Merton's four imperatives and the limits on their being observed provide the norms and counternorms making up the cultural standards and social arrangements in point.[22]

The four Mertonian imperatives are universalism (the results of research are equally valid irrespective of particularistic biases in the minds of the researchers), communism (the findings are in principle available to everybody), disinterestedness (fraud and dishonesty are minimized through institutional safeguards), and organized skepticism (every finding is in principle subject to being corrected or subsumed by way of a later finding, arrived at in competition among researchers). See Robert Merton, Social Theory and Social Structure (New York: Free Press, 1957), 550-60.

The truth tests such groups apply to the work of their members are a function of the basic beliefs to which members subscribe. Not only molecular biologists and meteorologists but also psychoanalysts, astrologers, and sociologists may constitute epistemic communities (or they may not).

I accept this definition as far as it goes. It must be augmented, however, to suit the specific circumstances of learning in international organizations constrained by institutional habit. For me, an epistemic community is composed of professionals (usually recruited from several disciplines) who share a commitment to a common causal model and a common set of political values. They are united by a belief in the truth of their model and by a commitment to translate this truth into public policy, in the conviction that human welfare will be enhanced as a result.

Epistemic communities profess belief in extracommunity reality tests. They are, in principle, open to the constant reexamination of prevailing beliefs about cause and effect, ends and means. They are ready to see more complex cause-effect chains, or to simplify these in line with new knowledge. Yet, being human, they also resist reality tests likely to disturb their claims to novelty and relevance; epistemic communities exhibit both the Mertonian norms of science and its counternorms. Rival epistemic groups thus exhibit the same behaviors as do rival schools of scientists. The ultimate test of truth is the collective decision by the users of knowledge as to which claim is more successful in solving a problem agreed by all as requiring solution. It is a common property of epistemic communities that they accept this judgment as legitimate.

I have put emphasis on the procedural aspects of epistemic behavior, on how the members of an epistemic community defend what they think is true. These aspects involve the procedures of science, scientific methodology, and the sociological canons for judging its generality.

The substantive aspect of belief requires even more emphasis. Members of an epistemic community profess belief not merely in scientific procedures of verification (belief that may be almost instinctive and may hardly require explicit articulation); they are primarily and overtly concerned with claims of substantive knowledge about whatever issue or problem attracts them to public organizations and political decision makers. Their knowledge about communicable diseases, deep-sea mineral deposits, nuclear fusion, or exchange rate stability gives them their claim to be heard.

How are epistemic communities organized, and how do they fit into international organizations? Sometimes they take the form of "invisible colleges," networks of the like-minded not employed in the same university, laboratory, or think tank. Sometimes they also create organizations of their own, such as the Club of Rome, various organizations of economists, of environmentalists, and of public health specialists.[23]

These and additional examples of epistemic communities can be found in William M. Evan, ed., Knowledge and Power in a Global Society (Beverly Hills: Sage, 1981); and Diana Crane, Invisible Colleges (Chicago: University of Chicago Press, 1972). It bears emphasizing that not every nongovernmental organization (NGO) is also an epistemic community. An NGO merits the label only if its members subscribe to the reality- and truth-testing procedures mentioned. A commitment to a belief in a common cause-effect model, the consistent advocacy of the belief in conjunction with shared political values, and success in forging alliances with political coalitions are not enough. Thus, groups of lawyers advocating stronger international human rights policies usually do not qualify as epistemic communities. Nor do any number of other NGOs active in international organizations. For a discussion of NGOs that may or may not meet our criteria, see Peter Willetts, ed., Pressure Groups in the Global Arena (London: Frances Pinter, 1982).

Such groups can influence international organizations if their members come to dominate standing expert advisory bodies or consistently serve as executors of programmatic decisions (as in economic development and public health projects). They may also penetrate organizations by acquiring a monopoly on staffing secretariat positions in their issue area. Thus, the development economists, political scientists, and engineers originally organized by Raul Prebisch in the U.N. Economic Commission for Latin America were an epistemic community that eventually managed to take over the U.N. Conference on Trade and Development (UNCTAD) secretariat. This can occur only when the epistemic community manages to forge an alliance with the dominant political coalition in an organization. It is equally possible, however, in the absence of such a successful coalition, that a given organizational unit is staffed by members of several rival epistemic communities. The success of an epistemic community thus depends on two features: (1) the claim to truth being advanced must be more persuasive to the dominant political decision makers than some other claim, and (2) a successful alliance must be made with the dominant political coalition.[24]

The success of epistemic communities tends to be much more spectacular in the natural than in the social sciences. This is equally true at the national level. The hiatus is explained by some as due to the preference for uncertainty on the part of administrators, because by claiming uncertain knowledge they are able to escape the need to change programs and to side-step blame for failure. Moreover, epistemic communities advocating change on the basis of social science research tend to lack "brokers" who can consistently inform the political consumers of knowledge of relevant findings. In the absence of respected brokers, the policymaker tends to prefer the layperson's "social knowledge" to whatever arcana are advocated by social scientists. See Laurence E. Lynn, Jr., ed., Knowledge and Policy: The Uncertain Connection (Washington, D.C.: National Academy of Sciences, 1978).