Preferred Citation: Sheehan, James J., and Morton Sosna, editors The Boundaries of Humanity: Humans, Animals, Machines. Berkeley: University of California Press, c1991 1991. http://ark.cdlib.org/ark:/13030/ft338nb20q/

The Boundaries of Humanity: Humans, Animals, Machines. Edited by James J. Sheehan and Morton Sosna

For Bliss Carnochan and Ian Watt, directors

extraordinaires, and the staff and friends of

the Stanford Humanities Center

ACKNOWLEDGMENTS

The editors would like to acknowledge some of those who made possible the 1987 Stanford University conference, "Humans, Animals, Machines: Boundaries and Projections," on which this volume is based. We are particularly grateful to Stanford's president, Donald Kennedy, and its provost, James Rosse, for their support of the conference in connection with the university's centennial. We also wish to thank Ellis and Katherine Alden for their generous support.

Special thanks are owed the staff of the Stanford Humanities Center and its director, Bliss Carnochan, who generously assisted and otherwise encouraged our endeavors in every way possible. We are also indebted to James Gibbons, Dean of Stanford's School of Engineering, who committed both his time and the Engineering School's resources to our efforts; Michael Ryan, Director of Library Collections, Stanford University Libraries, who, along with his staff, not only made the libraries' facilities available but arranged a handsome book exhibit, "Beasts, Machines, and other Humans: Some Images of Mankind"; and John Chowning, Center for Computer Research in Music and Acoustics, who organized a computer music concert. Other Stanford University members of the conference planning committee to whom we are very grateful include James Adams, Program in Values, Technology, Science, and Society; William Durham, Department of Anthropology; and Thomas Heller, School of Law. Several members of the planning committee, John Dupré, Stuart Hampshire, and Terry Winograd, contributed to this volume.

Not all who participated in the conference could be included in the book. We wish, nonetheless, to thank Nicholas Barker of the British Library, Davydd Greenwood of Cornell University, Bruce Mazlish of the Massachusetts Institute of Technology, Langdon Winner of the Rensselaer Polytechnic Institute, and from Stanford, Joan Bresnan and Carl Degler, for their important contributions. Their views and observations greatly enriched the intellectual quality of the conference and helped focus our editorial concerns.

Finally, we wish to thank Elizabeth Knoll and others at the University of California Press for their steadfast support.

J.J. S.

M. S.

GENERAL INTRODUCTION

Morton Sosna

The essays in this volume grew out of a conference held at Stanford University in April 1987 under the auspices of the Stanford Humanities Center. The subject was "Humans, Animals, Machines: Boundaries and Projections."

The conference organizers had two goals. First, we wanted to address those recent developments in biological and computer research—namely, sociobiology and artificial intelligence—that are not normally seen as falling in the domain of the humanities but that have reopened important issues about human nature and identity. By asking what it means to be human, these relatively new areas of research raise the question that is at the heart of the humanistic tradition, one with a long history. We believed such a question could best be addressed in an interdisciplinary forum bringing together humanities scholars with researchers from sociobiology and artificial intelligence, who, despite their overlapping concerns, largely remain isolated from one another. Second, we wanted to link related but usually separate discourses about humans and animals, on the one hand, and humans and machines, on the other. We wished to explore some of the parallels and differences in these respective debates and see if they can help us understand why, in some cases, highly specialized and even esoteric research programs in sociobiology or artificial intelligence can become overriding visions that carry large intellectual, social, and political implications. We recognized both that this is a daunting task and that some limits had to be placed on the material to be covered.

We have divided this volume into several sections. It opens with a general statement by philosopher Bernard Williams on the range of problems encountered in attempting to define humanity in relation either to animals or to machines. This is followed by sections on humans and animals and on humans and machines. These are separately introduced by James J. Sheehan, who provides historical background and commentary to the essays in each section while exploring connections between some of the issues raised by sociobiology and artificial intelligence. Sheehan further develops these connections in a concluding afterword. Together, Sheehan's pieces underscore the extent to which sociobiology and artificial intelligence have reopened issues at the core of the Western intellectual tradition.

In assembling the contributors, we chose to emphasize the philosophical, historical, and psychological aspects of the problem as opposed to its literary, artistic, theological, and public policy dimensions. We sought sophisticated statements of the sociobiological and pro-artificial intelligence viewpoints and were fortunate to obtain overviews from two of the most active and influential researchers in these areas, Melvin Konner and Allen Newell. Konner probes the ways genetic research and studies of animal behavior have narrowed the gap between biological and cultural processes, and he raises questions about the interactions between genetic predispositions and complex social environments. Newell outlines some of his and others' work on artificial intelligence, arguing that the increasingly sophisticated quest for a "unified theory of mind" will, if successful, profoundly alter human knowledge and identity. Although neither Konner nor Newell claims to represent the diversity of opinion in the fields of sociobiology or artificial intelligence (as other essays in the volume make clear, considerable differences of opinion exist within these fields), each holds an identifiably "mainstream" position. The reader who wishes to know more about the specifics of sociobiology or artificial intelligence might wish to start with their essays.

Since the question of what it means to be human is above all philosophical, however, the volume begins with the reflections of Bernard Williams. In "Making Sense of Humanity," Williams criticizes some of the claims made in the names of sociobiology and artificial intelligence without denying their usefulness as research programs that have contributed to human understanding. He focuses on the problem of reductionism, that is, reducing a series of complex events to a single cause or to a very small number of simple causes. For Williams, both the appeal and shortcomings of sociobiology and artificial intelligence lie in their powerfully reductive theories that provide natural and mechanistic explanations for what William James once called "the blooming, buzzing confusion of it all." In making the case for human uniqueness, Williams contends that, unlike the behavior of animals or machines, only human behavior is characterized by consciousness of past time, either historical or mythical, and by the capability of distinguishing the real from the representational, or, as he deftly puts it, distinguishing a rabbit from a picture of a rabbit. And unlike animal ethologies, human ethology must take culture into account. According to Williams, neither "smart" genes nor "smart" machines affect such attributes of humanity as imagination, a sense of the past, or a search for transcendent meaning. These, he insists, can only be understood culturally.

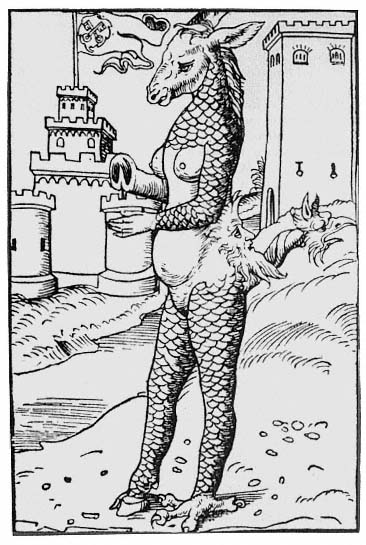

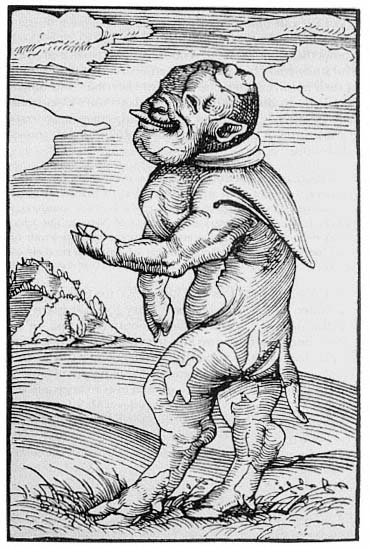

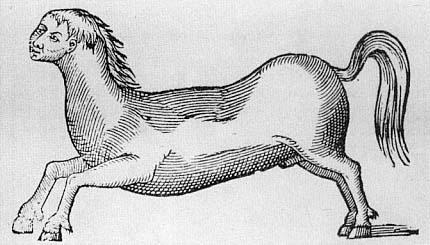

The section, Humans and Animals, focuses more directly on the relationship between biology and human culture. Arnold I. Davidson takes up some of the philosophical problems in a specific historical context, the century or so prior to the scientific revolution of the seventeenth century, when human identity stood firmly between the divine and natural orders. Davidson's "The Horror of Monsters" is a useful reminder that in earlier times, definitions of humanity were formed more by reference to angels than to animals, let alone machines. Since science as it emerged from medieval traditions was often indistinguishable from theology, the task of defining the human readily mixed the two discourses. Among other things, Davidson shows how the notion of monsters tested the long-standing belief in Western culture in the absolute distinction between humans and other animal forms in ways that prefigured some contemporary debates about sociobiology and artificial intelligence. His essay traces repeated attempts to reduce the human to a single a priori concept, to uncover linkages between moral and natural orders (or disorders), and to create allegories that legitimate a given culture's most cherished beliefs. Our own culture may find our predecessors' fascination with animal monsters amusingly misguided, but we continue to take more seriously—and are appropriately fascinated by—representations of monsters, from Dr. Frankenstein's to Robocop, that combine human intention with mechanical capacity.

In "The Animal Connection," Harriet Ritvo, a specialist in nineteenth-century British culture, brings Davidson's discussion of marginal beasts as projections of human anxiety closer to the present. By examining the ideas of animal breeders in Victorian England, she shows that much of their thought owed more to pervasive class, racial, and gender attitudes than to biology. Unlike the theologically inspired interpreters of generation analyzed by Davidson, the upper- and middle-class breeders described by Ritvo did not hesitate to tinker with the natural order by "mixing seeds." What one age conceived as monstrous became to them a routine matter of improving the species and introducing new breeds. Still, projections from these breeders' understandings of human society so permeated their views of biological processes that they commonly violated an essential element of Victorian culture: faith in the absolute dichotomy between human beings and animals. For Victorians, this was no small matter. Shocked by Darwin's theories but as yet innocent of Freud's, many saw the open violation of the boundary between humans and beasts as a sure recipe for disaster. In Robert Louis Stevenson's The Strange Case of Dr. Jekyll and Mr. Hyde, for example, when the kindly Dr. Henry Jekyll realizes that his experiment in assuming the identity of the apelike and murderous Edward Hyde has gone terribly awry, he is horrified at both his own enjoyment of Hyde's depravity and at his inability to suppress the beast within himself, save by suicide.[1] But Victorian animal breeders who claimed they could distinguish "depraved" from "normal" sexual activities on the part of female dogs were, according to Ritvo, openly (if unselfconsciously) acknowledging this very "animal connection." Ritvo also observes that the greatest slippage—that is, the displacement of human moral judgments onto cows, dogs, sheep, goats, and cats—occurred precisely in those areas where contemporary understanding of the actual physiology of reproduction was weakest.

Human "slippage" under the guise of science, especially at the frontiers of knowledge, is Evelyn Fox Keller's main concern in "Language and Ideology in Evolutionary Theory: Reading Cultural Norms into Natural Law." Keller argues that the concept of competitive individualism on which so much evolutionary theory depends is not drawn from nature. Rather, like the rampant anthropomorphism described by Ritvo, it, too, is a projection of human social, political, and psychological values. Focusing on assumptions within the fields of population genetics and mathematical ecology, Keller questions whether individualism necessarily means competition, pointing out many instances in nature—not the least being sexual reproduction—where interacting organisms can more properly be said to be cooperating rather than competing. Yet, so deeply is the notion of competition embedded in these fields that Keller wonders whether such linguistic usage is symptomatic of a larger cultural problem, ideology passing as science, which makes evolutionary theory as much a prescriptive as a descriptive enterprise. For Keller, language and the way we use it, not to mention our reasons for using it as we do, limit our discussion of what nature is. Not opposed to a concept of human nature, as such, Keller objects to the ideologically charged terms on which such a concept often rests.

The problem of linguistic slippage permeates discussion of both sociobiology and artificial intelligence. As a general rule, the greater the claims made by either of these disciplines, the greater is the potential for slippage. Like philosophical reductionism, linguistic slippage can simultaneously energize otherwise arcane scientific research projects, providing them with readily graspable concepts, while undermining them through oversimplification and distortion. In any case, sociobiology and artificial intelligence raise traditional questions about the relationship between language and science, between the observer and the observed, and between the subjects and the objects of knowledge. To one degree or another, all the essays in this volume confront this problem.

Is sociobiology merely the latest attempt to transfer strictly human preoccupations to a biological, and hence scientific, realm? Not according to Melvin Konner. In "Human Nature and Culture: Biology and the Residue of Uniqueness," Konner, an anthropologist and physician, makes the case for the sociobiological perspective. Drawing on recent genetic and primate research, he argues that categories like "the mental" or "the psychological," previously thought to be distinctively human cultural traits, are in fact shared by other species. As Konner sees it, this is all that sociobiology claims. Moreover, he acknowledges significant criticisms that undermined the credibility of earlier social Darwinists: their refusal to distinguish between an organism's survival and its reproduction, their inability to account for altruism in human and animal populations, or their misunderstanding of the exceedingly complex and still not fully understood relation between an organism and its environment. If left at that, apart from further reducing the already much narrowed gap separating humans from other animals, there would not be much fuss. But Konner also suggests that this inherited biological "residue," as he puts it, constitutes an essential "human nature." He then raises the question, If human nature does exist, what are the social implications? His answers range from the power of genes to determine our cognitive and emotional capacities to the assertion that, in human societies, conflict is inherent rather than some "transient aberration." If there is human uniqueness, according to Konner, it consists in our possessing the "intelligence of an advanced machine in the moral brain and body of an animal."

Given the diminished role of culture in such an analysis, sociobiology has aroused strong criticism. In his general reflections on the theme of biology and culture, philosopher John Dupré characterizes it as a flawed project that combines reductionism with conservative ideology. Davidson, Ritvo, and Keller, he notes, provide interesting case studies of how general theories can go wrong. Dupré finds Konner's view of science inadequate, both in its faith in objectivity and in its epistemological certainty. Although not a cultural relativist in the classic sense, Dupré, much like Williams, would have us pay more attention to human cultures in all their variability as a better way of understanding human behavior than biological determinism grounded in evolutionary theory.

Among the strongest appeals of any explanatory theory is its appeal to mechanism. This is as true for Newton's physics, Adam Smith's theory of wealth, or Marx's theory of class conflict as for evolution or sociobiology. Know a little and, through mechanism (as if by magic), one can predict a lot. This brings us to humanity's other alter ego, the machine. As with the boundary between humans and animals, the one between humans and machines not only has a history but has been equally influential in shaping human identity. In some ways, our relationship to machines has been more pressing and problematic. No one denies that human beings are animals or that animals, in some very important respects, resemble human beings. The question has always been what kind of animal, or how different from others, are we. But what does it mean if we are machines or, perhaps more disturbingly, if some machines are "like us"?

Roger Hahn begins the section, Humans and Machines, with several historical observations, which provide a useful context for considering current debates about the computer revolution and artificial intelligence. In "The Meaning of the Mechanistic Age," Hahn distinguishes between machines and the concept of mechanism as it came to be understood in seventeenth-century Europe. Machines, he notes, have been with us since antiquity (if not before), but prior to the Scientific Revolution, their creators rarely strove to make their workings visible. Indeed, as a way of demonstrating their own cleverness, they often deliberately hid or disguised the inner workings of their contrivances, much like magicians who keep their tricks secret. Early machines, in other words, did not offer themselves as blueprints for how the world worked. Nor did they principally operate as a means of harnessing and controlling natural forces for distinctively human purposes; more likely, they served as amusing or decorative curios. However, in the wake of the new astronomy, the new physics, and other discoveries emphasizing the universe as a well-ordered mechanism, the machine, according to Hahn, became something quite different: a device that openly displayed its inner workings for others to understand. By calling attention to their mechanisms, often through detailed visual representations, machines came to symbolize a new age of scientific knowledge and material progress attainable through mechanical improvements. "The visual representation of the machine forever stripped them of secret recesses and hidden forces," writes Hahn. "The tone of the new science was to displace the occult by the visible, the mysterious by the palpable." To see was to know, and to know was to change the world, presumably for the better.

At best, machines have only partially fulfilled this hope, and we are long past the day when diagrams of gears and pulleys could alone guarantee their tangibility and utility. Yet the concept of mechanism—what it means and what it can do—continues to generate controversy. Biological and evolutionary theories, despite their mechanistic determinism, could still leave us with minds, psyches, or souls. But with the advent of computers and artificial intelligence, even these attributes of humanity are in danger of giving way for good. The essays by Allen Newell, Terry Winograd, and Sherry Turkle consider the implications of the computer revolution.

In "Metaphors for Mind, Theories of Mind: Should the Humanities Mind?" Newell reminds us that the computer is a machine with a difference, "clearly not a rolling mill or a tick-tock clock." The computer threatens not only how we think about being human and the foundation of the humanities as traditionally conceived but all intellectual disciplines. Noting that a computational metaphor for "mind" is very common, Newell expresses dissatisfaction with such metaphorical thinking, indeed with all metaphorical thinking when it applies to science. For Newell, the better the metaphor, the worse the science. A scientific "theory of mind," however, if achieved (and Newell believes we are well on our way toward achieving one), would be quite another matter. He insists that, unlike the artificial rhetorical device of metaphor, theories formally organize knowledge in revealing and useful ways. In urging cognitive scientists to provide a unified theory of mind that can be represented as palpably as the workings of a clock, Newell exemplifies the epistemological spirit of the mechanistic age described by Hahn. He also believes that "good science" can and should avoid the kind of linguistic slippage that has characterized the debate about biology and culture.

As to what such a theory of mind (if correct) will mean for the humanities, Newell speculates that it will break down the dichotomy between humans and machines. Biological and technological processes will instead be viewed as analogous systems responding to given constraints and having, quite possibly, similar underlying features. At most, there will remain a narrower distinction between natural technologies, such as DNA, and artificial ones, such as computers, with both conceived as operating according to the same fundamental principles. Even elements frequently thought to be incommensurably human, such as "personality" or "insight," might be shown to be part of the same overall cognitive structure. And technology itself might finally come to be viewed as an essential part of our humanity, not an alien presence.

Newell's analysis treats artificial intelligence (AI) as an exciting research project, ambitious and potentially significant, yet still limited in its claims and applications. But critics have questioned whether AI has remained, or can or ought to remain, unmetaphorical. Is not, they ask, the concept of artificial intelligence itself a profoundly determining metaphor? As the editor of a special Daedalus issue on AI recently put it, "Had the term artificial intelligence never been created, with an implication that a machine might be able to replicate the intelligence of a human brain, there would have been less incentive to create a research enterprise of truly mythic proportions."[2] Among other difficulties, a science without metaphor may be a science without patronage.

Terry Winograd, himself a computer scientist, is less sanguine about AI. In "Thinking Machines: Can There Be? Are We?" Winograd characterizes AI research as inextricably tied to its technological—and hence metaphorical—uses. Why seek a theoretical model of mind, he asks, unless we also desire to create "intelligent tools" that can serve human purposes? Winograd is troubled by the slippage back and forth between these parts of the AI enterprise, which he feels compromises its integrity and leads to exaggerated expectations and overdetermined statements, such as Marvin Minsky's notorious assertion that the mind is nothing more than a "meat machine." The human mind, Winograd argues, is infinitely more complicated than mathematical logic would allow. Reviewing AI efforts of the past thirty years, Winograd finds that a basic philosophy of "patchwork rationalism" has guided the research. He compares the intelligence likely to emerge from such a program to rigid bureaucratic thinking where applying the appropriate rule can, all too frequently, lead to Kafkaesque results. "Seekers after the glitter of intelligence," he writes, "are misguided in trying to cast it in the base metal of computing." The notion of a thinking machine is at best fool's gold—a projection of ourselves onto the machine, which is then projected back as "us." Winograd urges researchers to regard computers as "language machines" rather than "thinking machines" and to consider the work of philosophers of language who have shown that, to work, human language ultimately depends on tacit understandings not susceptible to mechanistically determinable mathematical logic.

In "Romantic Reactions: Paradoxical Responses to the Computer Presence," social scientist Sherry Turkle provides a third perspective on AI. Where Hahn emphasizes the palpability of machines and their mechanisms as leading to "the age of reason," Turkle's empirical approach underscores a paradoxical reaction in the other direction. "Computers," she reminds us, "present a scintillating surface and exciting complex behavior but no window, as do things that have gears, pulleys, and levers, in their internal structure." Noting that romanticism was, at least in part, a reaction to the rationalism of the Enlightenment, Turkle raises the possibility that the very opacity of computer technology, along with the kind of disillusionment expressed by Winograd, might be leading us to romanticize the computer. This could, she suggests, lead to a more romantic rather than a more rationalistic view of people, because if we continue to define ourselves in the mirror of the machine, we will do so in contrast to computers as rule-processors and by analogy to computers as "opaque." These questions of defining the self in relation and in reaction to computers take on new importance given current directions in AI research that focus on "emergent" rather than rule-driven intelligence.

By emphasizing the computer as a projection of our psychological selves—complex, divided, and unpredictable as we are—Turkle speaks to Winograd's concern that computers cannot be made to think like humans by reversing the question. For her, the issue is not only whether computers will ever think like people but, as she puts it, "the extent to which people have always thought like computers." Turkle does not regard humans' inclination to define themselves in relation to machines or animals as pathological; rather, she views it as a normal expression of our own psychological uncertainties and of the machine's ambivalent nature, a marginal object poised between mind and not-mind. At the same time, in contrast to Newell, Turkle suggests that computers are as much metaphorical as they are mechanistic and that there are significant implications for non-rule-based theories of artificial intelligence in researchers' growing reliance on metaphors drawn from biology and psychology.

The section concludes with some reflections on the humans/animals/machines trichotomy by philosopher Stuart Hampshire. Philosophy, he confesses, often seems like "a prolonged conspiracy to avoid the rather obvious fact that humans have bodies and are biological beings," a view that allows sociobiology more legitimacy than Williams and Dupré would perhaps be willing to give it. But, as opposed to Turkle's notion of "romantic" machines, Hampshire goes on to make the point that, precisely because humans possess biologically rooted mental imperfections and unpredictabilities, the more machines manage to imitate the workings of the often muddled human mind, the less human they become. Muddled humans, he notes, at times still perform inspired actions; muddled machines, however, are simply defective. Hampshire's thoughts, in any event, are delightfully human.

The essays in The Boundaries of Humanity consider the question of whether humanity can be said to have a nature and, if so, whether this nature (or natures) can be objectively described or symbolically reproduced. They also suggest that sociobiology and artificial intelligence, in all their technical sophistication, put many old questions in a new light.

PROLOGUE:

MAKING SENSE OF HUMANITY

Bernard Williams

Are we animals? Are we machines? Those two questions are often asked, but they are not satisfactory. For one thing, they do not, from all the relevant points of view, present alternatives: those who think that we are machines think that other animals are machines, too. In addition, the questions are too easily answered. We are, straightforwardly, animals, but we are not, straightforwardly, machines. We are a distinctive kind of animal but not any distinctive kind of machine. We are a kind of animal in the same way that any other species is a kind of animal—we are, for instance, a kind of primate.

Ethology and Culture

Since we are a kind of animal, there are answers in our case to the question that can be asked about any animal, "How does it live?" Some of these answers are more or less the same for all human beings wherever and whenever they live, and of those universal answers, some are distinctively true of human beings and do not apply to other animals. There are other answers to the question, how human beings live, that vary strikingly from place to place and, still more significantly, from time to time. Some other species, too, display behavior that varies regionally—the calls of certain birds are an example—but the degree of such variation in human beings is of a quite different order of magnitude. Moreover, and more fundamentally, these variations essentially depend on the use of language and, associated with that, the nongenetic transmission of information between generations, features that are, of course, themselves among the most important universal characteristics distinctive of human beings. This variation in the ways that human beings live is cultural variation, and it is an ethological fact that human beings live under culture (a fact represented in the ancient doctrine that their nature is to live by convention).

With human beings, if you specify the ethological in detail, you are inevitably led to the cultural. For example, human beings typically live in dwellings. So, in a sense, do termites, but in the case of human beings, the description opens into a series of cultural specifications. Some human beings live in a dwelling made by themselves, some in one made by other human beings. Some who make dwellings are constrained to make them, others are rewarded for doing so; in either case, they act in groups with a division of labor, and so on. If one is to describe any of these activities adequately and so explain what these animals are up to, one has to ascribe to them the complex intentions involved in sharing a culture.

There are other dimensions of culture and further types of complex intention. Some of the dwellings systematically vary in form, being four-bedroom Victorians, for instance, or in the Palladian style, and those descriptions have to be used in explaining the variations. Such styles and traditions involve kinds of intentions that are not merely complex but self-referential: the intentions refer to the tradition, and at the same time, it is the existence of such intentions that constitutes the tradition. Traditions of this kind display another feature that they share with many other cultural phenomena: they imply a consciousness of past time, historical or mythical. This consciousness itself has become more reflexive and complex in the course of human development, above all, with the introduction of literacy. All human beings live under culture; many live with an idea of their collective past; some live with the idea of such an idea.

All of this is ethology, or an extension of ethology; if one is going to understand a species that lives under culture, one has to understand its cultures. But it is not all biology. So how much is biology? And what does that question mean? I shall suggest a line of thought about similarities and differences.

The story so far implies that some differences in the behavior of human groups are explained in terms of their different cultures and not in biological terms. This may encourage the idea that culture explains differences and biology explains similarities. But this is not necessarily so. Indeed, in more than one respect, the question is not well posed. First, there is the absolutely general point that a genetic influence will express itself in a particular way only granted a certain sort of environment. A striking example of such an interaction is provided by turtles' eggs, which if they are exposed to a temperature below 30 degrees Celsius at a certain point in development yield a female turtle but if to a higher temperature, a male one. Moreover, the possible interactions are complex, and many cases cannot be characterized merely by adding together different influences or, again, just in terms of triggering.[1] Changes in the environment may depend on the activities of the animals themselves. In the case of human beings, the environment and changes in it may well require cultural description.

Granted these complexities, it may not be clear what is meant by ascribing some similarity or difference between different groups of human beings to a biological rather than a cultural influence. But insofar as it makes sense to say anything of this sort, it can be appropriate to ascribe a difference in human behavior to a biological factor. Thus, the notable differences in the fertility rates of human societies at different times (a phenomenon that defies simple explanation) may be connected to a differential perception of risk.[2] This would provide a strong analogy to differences in the reproductive behavior in groups of other species, and in this sense, it would suggest a biological explanation. But many features of the situation would demand cultural description, such as the reproductive behaviors so affected, the ways in which risks are appreciated, and, of course, what events counted as dangerous (e.g., war).

In the opposite direction, it has been a pervasive error of sociobiology to suppose that if some practice of human culture is analogous to a pattern of behavior in other species, then it is all the more likely to be explained biologically if it is (more or less) universal among human beings. If this follows at all, it does so in a very weak sense. Suppose (what is untrue) that the subordinate role of women were a cultural universal. This might nevertheless depend on other cultural universals and their conditions, for example, the absence up to now of certain kinds of technology; it could turn out to be biologically determined at most to this extent, that if roles related to gender were to be assigned in those cultural contexts, biology favored this assignation.

We cannot be in a position to give a biological explanation of any phenomenon that has a cultural dimension, however widespread the phenomenon is, unless we are also in a position to interpret it culturally. This is simply an application, to the very special case of human beings, of the general truth that one cannot explain animal behavior biologically (in particular, genetically) unless one understands it ethologically.

Cognitive Science and Folk Psychology

The claim that we are animals is straightforwardly true. The claim that we are machines, however, needs a determinate interpretation if it is to mean anything. What some people mean by it (despite the existence of machines capable of random behavior) is merely that we, like other large things, can be deterministically characterized, to some acceptable approximation, in terms of physics. This seems to me probably true and certainly uninteresting for the present discussion. Any more interesting version must claim that we are each a machine; and I take the contemporary content of this to be that we are each best understood in terms of an information-processing device. This, in turn, represents a belief in a research program, that of psychology as cognitive science. However, the claim that human beings are in this sense machines involves more than the claim that human beings are such that cognitive science is a good program for psychology. It must also imply that psychology provides an adequate program for understanding human beings; this is a point I shall come back to.

To some extent, the claim that human beings can be understood in terms of psychology as cognitive science must surely be an empirical one, to be tested in the success of the research program. For an empirical claim, however, it has attracted a surprising amount of a priori criticism, designed to show that the undertaking is mistaken in principle. Less extreme, obviously, than either the comprehensive research program or the comprehensive refutation of it is the modest suggestion that this kind of model will be valuable for understanding human beings in some respects but not others. The suggestion is initially attractive but at the same time very indeterminate, and, of course, it may turn out that, like some other compromises, it is attractive only because it is indeterminate. I should like to raise the question of how the compromise might be made more determinate.

Those who want to produce a comprehensive refutation of the program sometimes make the objection that only a living thing can have a psychology. This can mean two different things. One is that psychological processes, of whatever kind, could be realized only in a biological system, that a mind could only be secreted by a brain.[3] This, if true, would certainly put the research program out of business, since, whatever other refinements it receives, its central idea must be that psychological processes could in principle be realized by any system with an adequate information-theoretical capacity. However, I see no reason why in this form the objection should be true: that mind is essentially biochemical seems a no more appealing belief than that it is essentially immaterial.

A more interesting version of the objection that only a living thing can have a psychology takes the form of saying that a human psychology, at least, can be possessed only by a creature that has a life, where this implies, among other things, that its experience has a meaning for it and that features of its environment display salience, relevance, and so on, particularly in the light of what it sees as valuable. This seems to me much more likely to be true, and it has a discouraging consequence for the research program in its more ambitious forms, because the experience described in these terms is so strongly holistic. An example is provided by complex emotions such as shame, contempt, or admiration, where an agent's appreciation of the situation, and his or her self-reflection, are closely related to one another and also draw on an indefinitely wide range of other experiences, memories, social expectations, and so on. There is no reason to suppose that one could understand, still less reproduce, these experiences in terms of any system that did not already embody the complex interconnections of an equally complex existence.[4]

Another example is worth mentioning particularly because it has so often appeared in the rhetoric of arguments about such questions. This is creativity. An information-processing device might be a creative problem solver, and it might come up with what it itself could "recognize" as fruitful solutions to problems in mathematics or chess (wagers on this being impossible were always ill-advised). But it could not display a similar creativity in some less formalized intellectual domain or in the arts. This is not (repeat, not) because such creativity is magical or a resplendent counterexample to the laws of nature.[5] It is simply that what we call creativity is a characteristic that yields not merely something new or unlikely but something new that strikes us as meaningful and interesting; and what makes it meaningful and interesting to us can lie in an indeterminately wide range of associations and connections built up in our existence, most of them unconscious. The associations are associations for us: the creative idea must strike a bell that we can hear. In the sense that a device can be programmed to be a problem solver, there may be, in these connections, no antecedent problem. (Diaghilev, asking Cocteau for a new ballet, memorably said, "Étonne-moi, Jean.") None of this is to deny that there may be a description in physical terms of what goes on when a human being comes up with something new and interesting. The difficulty for the research program is that there is no reason to expect that in these connections, at least, there will be an explanatory psychological account at the level that it wants, lying between the physical account, on the one hand, and a fully interpretive account, which itself uses notions such as meaning, on the other.

The activities and experiences that I have mentioned as providing a difficulty for the research program are all specifically human. Although it may sometimes have been argued that some such holistic features must belong to any mentality at all, the most convincing account of the problem connects them with special features of human consciousness and culture. The question on these issues that I should like to leave for consideration is the following: If we grant this much, what follows for activities and, particularly, abilities that human beings do prima facie share with other creatures? If we grant what has just been suggested, there will not be an adequate cognitive-scientific account of what it is to feel embarrassment, or of recognizing a scene as embarrassing, or (perhaps) of seeing that a picture is a Watteau. But there could be, and perhaps is, such an account of seeing that something is a cylinder or of recognizing something as a rabbit. Other animals have abilities that can be described in these terms, but are they just the same abilities in human beings and in other animals? (Consider, for instance, the relations, in the human case, between being able to recognize a rabbit and being able to recognize a rabbit in many different styles of picture.) What does seem clear, at the very least, is that a cognitive-scientific program for rabbit recognition, and a human being skilled in recognizing rabbits, would not be disposed to make quite the same mistakes. What we shall need here, if this kind of research program is to help us in understanding human beings, is an effective notion of a fragment of human capacities. There is no reason to think that there cannot be such a notion, but we need more thoughts about how it may work.

The cognitive science research program and the hopes that may be reasonably entertained for it are a very different matter from the ambitions that some people entertain on the basis of a metaphysics of the cognitive science program, in particular, that the concepts of such a science will eventually replace, in serious and literal discourse, those of "folk psychology." The main difficulty in assessing this idea is that the boundaries of "folk psychology" are vague. Sometimes it is taken to include such conceptions as Cartesian dualism or the idea that the mental must be immediately known. These conceptions are indeed unsound, but if you attend to what "the folk" regularly say and do rather than to what they may rehearse when asked theoretical questions, it is far from clear that they have these conceptions in their psychology. If they do, it does not need cognitive science to remove them, but, if anything, reflection, that is to say, philosophy.

In any case, the interesting claims do not concern such doctrines but rather the basic materials of folk psychology, notions such as belief, desire, intention, decisions, and action. These really are used by the folk in understanding their own and other people's psychology. These, too, the metaphysicians of cognitive science expect to be replaced, in educated thought, by other, scientific, conceptions.[6] In part, this stance may depend on confusing two different questions, whether a given concept belongs to a particular science and whether it can coexist with that science, as opposed to being eliminated by it. "Golf ball" is not a concept of dynamics, but this is quite consistent with the truth that among the things to which dynamics applies are golf balls.[7] It may be said here that the situation with concepts such as belief, desire, and intention is different, because they, unlike the concept of a golf ball, have explanatory ambitions, and folk psychology, correspondingly, is in the same line of business as the theory, call it cognitive science, that will produce more developed explanations. But this is to presuppose that cognitive science does not need such concepts to characterize what it has to explain. No science can eliminate what has to exist if there is to be anything for it to explain: Newton's theory of gravitation could not show that there are no falling bodies or Marr's theory of vision, that there is no such thing as vision.

The metaphysicians perhaps assume that there is a neutral item that cognitive science and folk psychology are alike in the business of explaining, and that is behavior. But to suppose that there could be an adequate sense of "behavior" that did not already involve concepts of folk psychology—the idea of an intention, in particular—is to fall back into a basic error of behaviorism. Cognitive science rightly prides itself on having left the errors of behaviorism behind; but that should mean not merely that it has given up black box theorizing but, connectedly, that it recognizes that the kinds of phenomena to be explained cannot be brought together by purely nonpsychological criteria, as, for instance, classes of displacements of limbs.[8]

Humanity and the Human Sciences

The claim that we are machines was the claim, I said earlier, that we are each a machine, and this, as I paraphrased it, entailed the idea that psychology is adequate for the understanding of human beings. What I have particularly in mind here relates to the much-discussed idea that for most purposes, at least, of explaining what human beings do, we can adopt the posture of what is called methodological solipsism and think of these creatures, in principle, as each existing with its own mental setup, either alone or in relation to a physical environment not itself characterized in terms of any human science. This approach has been criticized in any case for reasons in the theory of meaning.[9] My concern here, however, is not with those issues but with the point that to make sense of the individual's psychology, it may well be necessary to describe the environment in terms that are the concern of other human sciences. It may be helpful, in this connection, to see how the false conception of methodological solipsism differs from another idea, which is correct. The correct idea is inoffensive to the point of triviality; I label it "formal individualism,"[10] and it states that there are ultimately no actions that are not the actions of individual agents. One may add to this the truth that the actions of an individual are explained in the first place by the psychology of that individual: in folk psychological terms, this means, for instance, that to the extent those actions are intentional, they are explained in the first place by the individual's intentions.

The simple truths of formal individualism are enough to rule some things out. They imply, for instance, that if some structural force brings about results in society, it must do so in ways that issue in actions produced by the intentions of individuals, though those intentions, of course, will not adequately express or represent those forces. It also follows (unsurprisingly, I hope) that if Germany declared war, then some individuals did things that, in the particular circumstances, constituted this happening. But none of this requires that such things as Germany's declaring war are logically reducible to individual actions. (Germany embarked on war in 1914 and again in 1939, but the types of individual action that occurred were different. For one thing, Germany had different constitutions in these years.) Nor is it implied that the concepts that occur in individuals' intentions and in the descriptions of their actions can necessarily be reduced to individualist terms; in the course of Germany's declaring war on some occasion, someone no doubt acted in the capacity of chancellor, and there is no credible unpacking of that conception in purely individualist terms. Again, it is a matter not only of the content of agents' intentions but of their causes. Some of the intentions that agents have may well require explanation in irreducibly social terms. Thus, some intentions of the person who is German Chancellor will have to be explained in terms of his being German Chancellor.[11]

What is true is that each action is explained, in the first place, by an individual's psychology; what is not true is that the individual's psychology is entirely explained by psychology. There are human sciences other than psychology, and there is not the slightest reason to suppose that one can understand humanity without them.

How the human sciences are related to one another—indeed, what exactly the human sciences are—is a much-discussed question that I shall not try to take up. I hope that if we are able to take a correct approach to the whole issue, this will make it less alarming than some obviously find it to accept that the human sciences should essentially deploy notions of intention and meaning and that they should flow into and out of studies such as history, philosophy, literary criticism, and the history of art which are labeled "the humanities" and perhaps are not called "sciences" at all. If it is an ethological truth that human beings live under culture, and if that fact makes it intelligible that they should live with ideas of the past and with increasingly complex conceptions of the ideas that they themselves have, then it is no insult to the scientific spirit that a study of them should require an insight into those cultures, into their products, and into their real and imagined histories.

Some resistance to identifying the human sciences in such terms—"humanistic" terms, as we might say—comes, no doubt, simply from vulgar scientism and a refusal to accept the truth, at once powerful and limiting, that there is no physics but physics. But there are other reasons as well, to be found closer to the human sciences themselves. I have suggested so far that biology, here as elsewhere, requires ethology and that the ethology of the human involves the study of human cultures. Looking in a different direction, I have suggested that psychology as cognitive science, whatever place of its own it may turn out to have, should not have universalist and autonomous aspirations. But there is a different kind of challenge to the humane study of humanity, which comes from cultural studies themselves. I cannot in this context do much more than mention it, but it should be mentioned, since it raises real questions, sometimes takes the form of extravagantly deconstructive ambitions, and often elicits unhelpfully conservative defenses.

This challenge is directed to the role of the humanities now. It is based not on any scientific considerations, or on any general characteristics of human life, but on certain features of the modern—or perhaps, in one sense of that multipurpose expression, postmodern—world.

The claim is that this world is liberated from, or at least floating free from, the past, and that in this world, history is kitsch. Above all, it is a world in which particular cultural formations are of declining importance and are becoming objects of an interest that is merely nostalgic or concerned with the picturesque—that is to say, a commercial interest.

If applied to our need for historical understanding, such a view is surely self-defeating, because the ideas of modernity and postmodernity are themselves historical categories; they embody an interpretation of the past. This would be true even if the conception of a general modernity completely transcending local and cultural variation were correct. But it remains to be seen whether that conception is even correct; there is no reason at the moment, as I understand the situation, to suppose that patterns of development are independent of history and culture; South Korea and Victorian England are by no means the same place. But however that may be, these are indisputably matters for historical and cultural understanding.

What is more problematic is our relation in the modern world to the literature and art of the past. Our historical interest in it—our interest in it as, for instance, evidence of the past—raises no special question, but the study of the humanities has always gone beyond this, in encouraging and informing an interest in certain works, picked out both by their quality and their relation to a particular tradition, as cultural objects for us, as formative of our experience. There is obviously a great deal to be said about this and about such phenomena as the interest shown by all developing countries in the canon of European painting and music.

Some of what needs to be said is obviously negative, and it is a real question whether certain famous artworks can survive—a few of them physically, all of them aesthetically—their international marketing. But the conversion of works of art into commodities is one thing, and their internationalization is another, even if the two things have up to now coincided, and we simply do not yet know, as it seems to me, what depth of experience of how much of the art of the past will be available to the human race if its cultural divergences are in fact diminished. However, it is worth recalling the number of cultural transitions that have already been effected by the works of the past in arriving even where they now are in our consciousness. What was there in classical antiquity itself, or in the complex history of its transmission and its influence, that might have led us to expect that as objects of serious study seven plays of Sophocles should now be alive and well and living in California?

"Humanity" is, of course, a name not merely for a species but for a quality, and it may be that the deepest contemporary reasons for distrusting a humanistic account of the human sciences are associated with a distrust of that quality, with despair for its prospects, or, quite often, with a hatred of it. Some legatees of the universalistic tendencies of the Enlightenment lack an interest in any specific cultural formation or other typically human expression and at the limit urge us to rise above the local preoccupations of "speciesism." Others, within the areas of human culture, have emphasized the role of structural forces to a point at which human beings disappear from the scene. There are other well-known tendencies with similar effects. But the more that cultural diversity within the human race declines, and the more the world as a whole is shaped by structures characteristic of modernity, the more we need not to forget but to remind ourselves what a human life is, has been, can be. This requires a proper understanding of the human sciences, and that requires us to take seriously humanity, in both senses of the term. It also helps us to do so.

PART ONE—

HUMANS AND ANIMALS

One—

Introduction

James J. Sheehan

In 1810, William Blake painted a picture that came to be known as Adam Naming the Beasts. Blake's portrait of the first man reminds us of a Byzantine icon of Christ: calm, massive, and immobile, Adam dominates the scene. One of his arms is raised in an ancient gesture signifying speech, while around the other a serpent meekly coils. In the background, animals move in an orderly, peaceful file. Of course, we know the harmony depicted here will not last. Soon Adam and his progeny will lose their serene place in nature; no longer will they be comfortable in their sovereignty over animals or secure in the unquestioned power of their speech. Our knowledge of what is coming gives Blake's picture its special, melancholy power.[1]

Since the Fall, man's place in nature has always been problematic. The problems begin with Genesis itself, where the story of creation is told twice. In the second chapter, the source of Blake's picture, God creates Adam and then all other living things, which are presented to man "to see what he would call them: and whatsoever Adam called every living creature, that was the name thereof." The first chapter, however, has a somewhat different version of these events. Here man is the last rather than the first creature to be made; while still superior to the rest by his special relationship to God, man nevertheless appears as part of a larger natural order. A similar ambiguity can be found in Chapter Nine, which begins with a divine promise to Noah that all beings will fear him but then goes on to describe a covenant between God and Noah and "every living creature of all flesh." From the very start of the Judeo-Christian tradition, therefore, humanity is at once set apart from, and joined with, the realm of other living things.[2]

In Greek cosmology, humanity's relationship to animals was yet more uncertain. Like the Hebrews, the Greeks seemed eager to establish human hegemony over nature. Animal sacrifice, which was so central to Greek religion, ritually affirmed the distinctions between humans and beasts, just as it sought to establish connections between the human and divine. Aristotle, the first great biologist to speculate about human nature, developed an elaborate hierarchy of living beings, in which all creatures—beginning with human females—were defined on a sliding scale that began with adult males. But the line between humanity and its biological neighbors was more permeable for Greeks than for Hebrews. Gods frequently took on animal form, which allowed them to move about the world in disguise. As punishment, humans could be turned into beasts. Moreover, a figure like Heracles, who was human but with supernatural connections, expressed his association with animals by wearing skins on his body and a lion's head above his own. And if animal sacrifice set humans and animals apart, there were other rituals that seemed to blur the distinction between them. Dionysus's Maenads, for instance, lived wild and free, consumed raw flesh, and knew no sexual restraint. By becoming like beasts, the Maenads achieved a "divine delirium" and thus direct contact with the gods.[3]

Christians saw nothing godlike in acting like a beast. To them, the devil often appeared in animal form, a diabolic beast or, as Arnold Davidson points out, in some terrible mix of species. Bestiality, sexual transgression across the species barrier, was officially regarded as the worst sin against nature; it remained a capital crime in England until the second half of the nineteenth century. Humanity's proper relationship to animals was that of master; beasts existed to serve human needs. "Since beasts lack reason," Saint Augustine taught, "we need not concern ourselves with their sufferings," an opinion echoed by an Anglican bishop in the seventeenth century who declared, "We may put them [animals] to any kind of death that the necessity either of our food or physic will require." Even those who took a softer view of humanity's relationship with animals believed that our hegemony over the world reflected our special ties to the creator. "Man not only rules the animals by force," the Renaissance philosopher, Ficino, wrote, "he also governs, keeps and teaches them. Universal providence belongs to God, who is the universal cause. Hence man who generally provides for all things, both living and lifeless, is a kind of God."[4]

Although set apart from the rest of creation by their privileged relationship with God, many Christians felt a special kinship to animals. As Keith Thomas shows in his splendid study, Man and the Natural World, so close were the ties of people to the animals among whom they lived that often "domestic beasts were subsidiary members of the human community." No less important than these pressing sympathies of everyday interdependence were the weight of cultural habit and the persistent power of half-forgotten beliefs. Until well into the eighteenth century, many Europeans viewed the world anthropomorphically, imposing on animals human traits and emotions, holding them responsible for their "crimes," admiring them for their alleged expressions of pious sentiment. Although condemned by the orthodox and ridiculed by secular intellectuals, belief in the spirituality of animals persisted. As late as the 1770s, an English clergyman could write, "I firmly believe that beasts have souls; souls truly and properly so-called."[5]

By the end of the eighteenth century, such convictions were surely exceptional among educated men and women. The expansion of scientific knowledge since the Renaissance had helped to produce a view of the world in which there seemed to be little room for animal souls. The great classification schemes of the late seventeenth and eighteenth centuries encouraged rational, secular, and scientific conceptions of the natural order. As a result, the anthropomorphic attitudes that had invested animals—and even plants—with human characteristics gradually receded; nature was now seen as something apart from human affairs, a realm to be studied and mastered with the instruments of science. Here is Thomas's concise summary of this process:

In place of a natural world redolent with human analogy and symbolic meaning, and sensitive to man's behavior, they [the natural scientists] constructed a detached natural scene to be viewed and studied by the observer from the outside, as if by peering through a window, in the secure knowledge that the objects of contemplation inhabited a separate realm, offering no omens or signs, without human meaning or significance.[6]

Within this new world, humans' claims to hegemony were based on their own rational faculties rather than divine dispensation. Reason became the justification as well as the means of humanity's mastery. Because they lack reason, Descartes argued, animals were like machines, without souls, intelligence, or feeling. Animals do not act independently, "it is nature that acts in them according to the arrangement of their organs, just as we see how a clock, composed merely of wheels and springs, can reckon the hours." Rousseau agreed. Every animal, he wrote in A Discourse on Inequality, was "only an ingenious machine to which nature has given sense in order to keep itself in motion and protect itself." Humans are not in thrall to their instincts and senses; unlike beasts, when nature commands, humans need not obey. Free will, intellect, and above all, the command of language give people the ability to choose, create, and communicate.[7]

Not every eighteenth-century thinker was sure that humanity's unquestioned uniqueness had survived the secularization of the natural order. Lord Bolingbroke, for example, still regarded man as "the principal inhabitant of this planet" but cautioned against making too much of humanity's special status.

Man is connected by his nature, and therefore, by the design of the Author of all Nature, with the whole tribe of animals, and so closely with some of them, that the distance between his intellectual faculties and theirs, which constitutes as really, though not so sensibly as figure, the difference of species, appears, in many instances, small, and would probably appear still less, if we had the means of knowing their motives, as we have of observing their actions.

When Alexander Pope put these ideas into verse, he added that the sin of pride that brought Adam's fall came not from his approaching too close to God but rather from drawing too far away from other living things:

Pride then was not, nor arts that pride to aid;

Man walk'd with beast, joint tenants of the shade;

And it was Pope who best expressed the lingering anxiety that must attend humanity's position between gods and beasts:

Plac'd in this isthmus of a middle state,

A being darkly wise and rudely great,

With too much knowledge for the sceptic side,

With too much weakness for the stoic pride,

He hangs between; in doubt to act or rest;

In doubt to deem himself a God or Beast;

In doubt his Mind or Body to prefer;

Born but to die, and reas'ning but to err. . . .

Chaos of Thought and Passion all confus'd,

Still by himself abus'd, or disabus'd;

Created half to rise, and half to fall,

Great lord of all things, yet a prey to all;

Sole judge of Truth, in endless error hurl'd;

The glory, jest, and riddle of the world.[8]

In the second half of the eighteenth century, what Pope had once called the "vast chain of being" began to be seen as dynamic rather than static, organic rather than mechanistic. The natural order now seemed to be the product of a cosmic evolution that, as Kant wrote in 1755, "is never finished or complete." This meant that everything in the universe, from species of plants and animals to the structure of distant galaxies and the earth's surface, was produced by and subject to powerful forces of change. By the early nineteenth century, scientists had begun to examine this evolutionary process in a systematic fashion. In 1809, Jean Baptiste de Lamarck published Philosophie zoologique, which set forth a complex theory to explain the transformation of species over time. Charles Lyell, whose Principles of Geology began to appear in 1830, doubted biological evolution but offered a compelling account of the earth's changing character through the long corridors of geologic time. Thus was the stage set for the arrival of Darwin's Origin of Species, by far the most famous and influential of all renditions of "temporalized chains of being." With this book, Darwin provides the basis for our view of the natural order and thus links eighteenth-century cosmology and twentieth-century evolutionary biology.[9]

Even before Darwin formulated his theory of natural selection, he seems to have sensed that his scientific observations would have powerful implications for the relationship between humans and animals. In a notebook entry of 1837, Darwin first sketched what would become a central theme in his life's work:

If we choose to let conjecture run wild, then animals, our fellow brethren in pain, diseases, death, suffering and famine—our slaves in the most laborious works, our companions in our amusements—they may partake [of] our origins in one common ancestor—we may be all netted together.

When he published Origin of Species twenty-two years later, he approached the matter delicately and indirectly: "it does not seem incredible" that animals and plants developed from lower forms, "and if we admit this, we must likewise admit that all the organic beings which have ever lived on this earth may be descended from some one primordial form." In any event, he promised that in future research inspired by the theory of natural selection, "much light will be thrown on the origin of man and his history."[10]

The meaning of Darwinism for human history quickly moved to the center of the controversies that followed the publication of Origin. In 1863, T. H. Huxley, for example, produced Man's Place in Nature. Darwin himself turned to the evolution of humanity in The Descent of Man, where he set out to demonstrate that "there is no fundamental difference between man and the higher mammals in their mental faculties." Each of those characteristics once thought to be uniquely human turns out to be shared by higher animals, albeit in lesser degrees: animals cannot speak, but they do communicate; they are intellectually inferior to humans, but they "possess some power of reasoning"; they cannot work as we do, but they can even use tools in a rudimentary way. Darwin's discussion of animal self-consciousness is worth quoting at length, not simply because it conveys the flavor of his argument but also because it illustrates the ease with which he slipped from talking about differences between humans and animals to describing differences between "races" of men.

It may be freely admitted that no animal is self-conscious, if by this term it is implied that he reflects on such points, as whence he comes or whither he will go, or what is life and death, and so forth. But how can we feel sure that an old dog with excellent memory and some power of imagination, as shewn by his dreams, never reflects on his past pleasures and pains in the chase? And this would be a form of self-consciousness. On the other hand, as Büchner has remarked, how little can the hard-worked wife of a degraded Australian savage, who uses very few abstract words, and cannot count above four, exert her self-consciousness, or reflect on the nature of her own existence.

Darwin carried on his examination of humans and animals in The Expression of the Emotions in Man and Animals. Here he seeks to demonstrate that basic emotions have common origins and a biological base by pointing out the similarity of emotional expressions among human societies and between humans and animals. Like reason, language, and consciousness, emotions such as love, fear, and shame are not the sole and undisputed property of humanity.[11]

The research program suggested in this work on human and animal emotions was not immediately taken up by Darwin's many disciples. There were, to be sure, many who sought to apply Darwinism to human society, but they usually did so without a systematic examination of the resemblances between human and animal behavior. To social scientists influenced by Darwin, what mattered was less that people were animals than that they were still, in Harriet Ritvo's phrase, "the top animals," separated from the rest by what Darwin himself had called man's "noble qualities" and "godlike intellect." Most of the natural scientists who followed Darwin turned in the opposite direction, away from humans toward other species with longer evolutionary histories and more accessible biological structures. As a result, empirical work on the connection between human and animal behavior, so central to Darwin's work on emotions, did not become an important part of his legacy until the second half of the twentieth century.[12]

The direct heirs of Darwin's research on human and animal emotion were scientists who studied behavioral biology, the discipline that would come to be called ethology. Although important research on ethology had been conducted during the 1920s and 1930s, the subject did not become prominent until the 1960s; in 1973, three leading ethologists shared the Nobel Prize. The new interest in ethology encouraged the publication of a variety of works of quite uneven quality, but all shared the conviction that studying the similarity of behavior existing across animal species could yield important insights into the character of human individuals and groups. For example, Konrad Lorenz, a pioneer in the field and one of the Nobel laureates, maintained that, in both man and animals, aggressive behavior was instinctual. "Like the triumph ceremony of the greylag goose, militant enthusiasm in man is a true autonomous instinct: It has its own releasing mechanisms, and like the sexual urge or any other strong instinct, it engenders a specific feeling of intense satisfaction." Such deeply rooted instincts, ethologists warned, will inevitably pose problems for, and set limits on, humans' ability to control themselves and their societies.[13]

By the 1970s, popular and scientific interest in ethology had become enmeshed with a larger and more ambitious set of ideas and research enterprises conventionally called sociobiology. Edward O. Wilson, perhaps sociobiology's most vigorous exponent, describes it as the "scientific study of the biological basis of all forms of social behavior in all kinds of organisms, including man." Wilson began to define his view of the field in the concluding chapter of The Insect Societies (1971), which called for the application of his work on the population biology and zoology of insects to vertebrate animals. Four years later, he concluded Sociobiology: The New Synthesis with a chapter entitled "Man: From Sociobiology to Sociology." This was followed in 1978 with the more popularly written On Human Nature, which examined what Wilson regarded as "four of the elemental categories of behavior, aggression, sex, altruism, and religion," from a sociobiological perspective. Wilson brought an ethologist's broad knowledge of animal behavior to these subjects but added his own growing concern for the genetic basis of instincts and adaptations. This genetic dimension has become increasingly important in Wilson's most recent work.[14]

Sociobiology in general and Wilson in particular have been the subject of intense attacks from a variety of directions. In the course of these controversies, the term has tended to become a catchall for a variety of different developments in behavioral biology. Like many other controversial movements, sociobiology often seems more solid and coherent to its opponents, who can easily define what they oppose, than to its advocates, who have some trouble agreeing on what they have in common. It is worth noting, for example, that Melvin Konner, while sympathetic to Wilson in many ways, explicitly denies that his own work, The Tangled Wing (1982), is sociobiology. But what Konner and the sociobiologists do share is the belief that most studies of human beings have been too "anthropocentric." If, as Wilson and others claim, "homo sapiens is a conventional animal species," then there is much to be learned by viewing the human experience as part of a broader biological continuum. Doing so will help us to understand what Darwin, in the dark passage at the end of The Descent of Man, referred to as "the indelible stamp of his lowly origin" which man still carries in his body and what Konner, in a contemporary version of the same argument, calls the "biological constraints on the human spirit."[15]

We will return to some of the questions raised by sociobiology in the conclusion to this volume. For the moment, it is enough to point out that the conflicts surrounding it, illustrated by the works of Konner and Dupré in the following section, are new versions of ancient controversies. These controversies, while informed by our expanding knowledge of the natural world and expressed in the idiom of our scientific culture, have at their core our persistent need to define what it means to be human, a need that leads us, just as it led the authors of Genesis, to confront our kinship with and differences from animals.

Two—

The Horror of Monsters*

Arnold I. Davidson

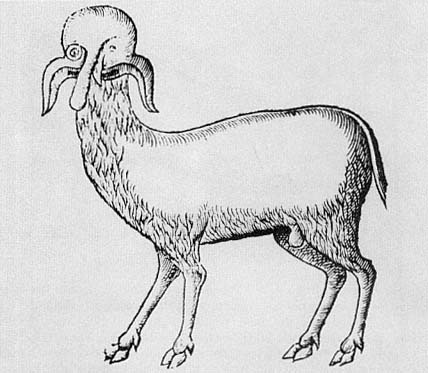

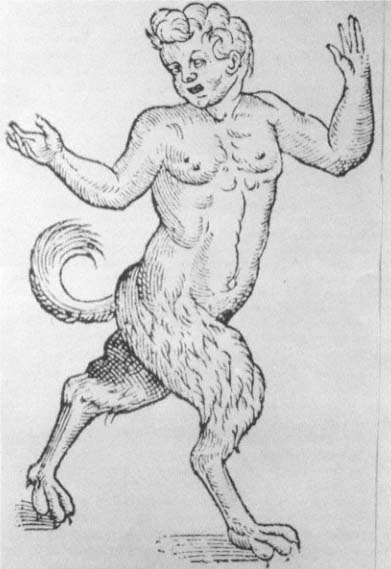

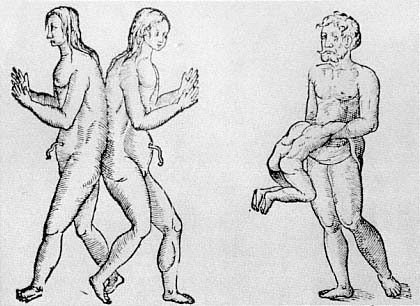

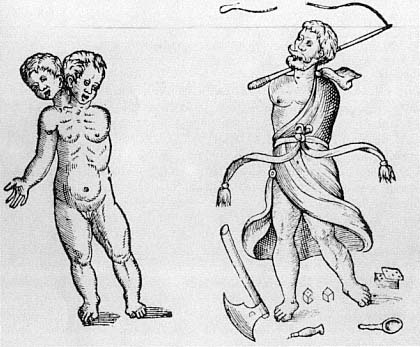

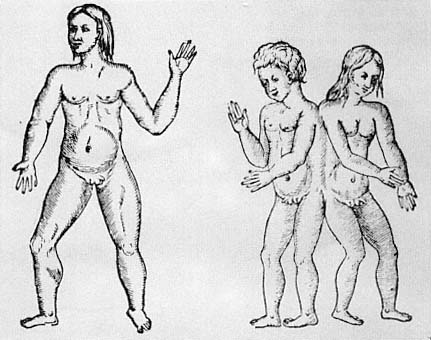

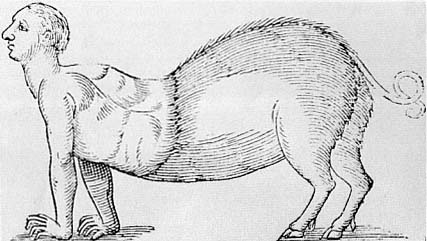

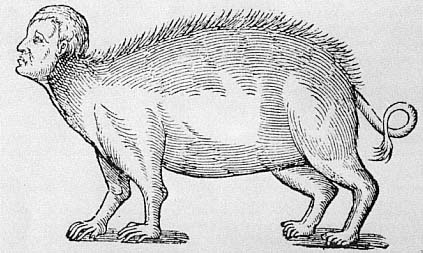

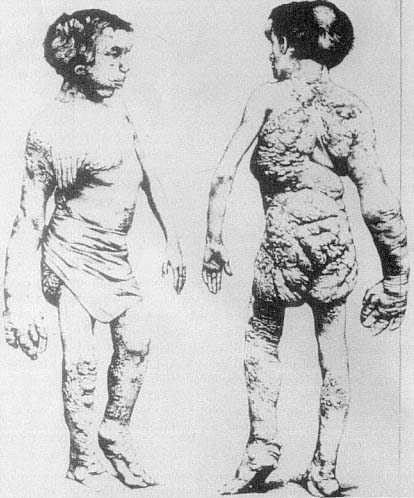

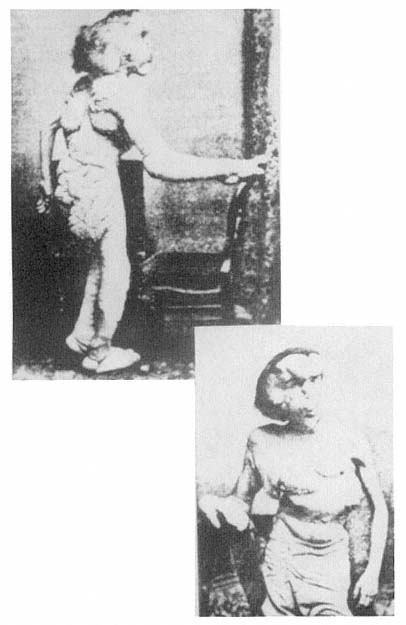

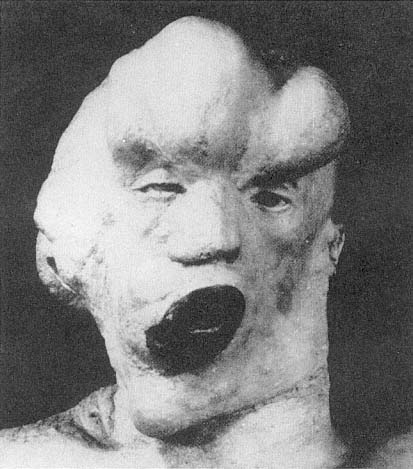

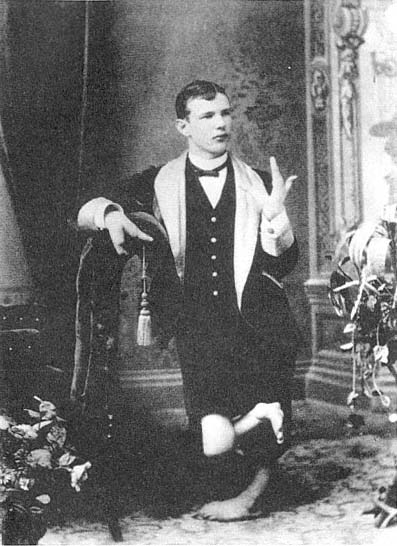

As late as 1941, Lucien Febvre, the great French historian, could complain that there was no history of love, pity, cruelty, or joy. He called for "a vast collective investigation to be opened on the fundamental sentiments of man and the forms they take."[1] Although Febvre did not explicitly invoke horror among the sentiments to be investigated, a history of horror can, as I hope to show, function as an irreducible resource in uncovering our forms of subjectivity.[2] Moreover, when horror is coupled to monsters, we have the opportunity to study systems of thought that are concerned with the relation between the orders of morality and of nature. I will concentrate here on those monsters that seem to call into question, to problematize, the boundary between humans and other animals. In some historical periods, it was precisely this boundary that, under certain specific conditions that I shall describe, operated as one major locus of the experience of horror. Our horror at certain kinds of monsters reflects back to us a horror at, or of, humanity, so that our horror of monsters can provide both a history of human will and subjectivity and a history of scientific classifications.

The history of horror, like the history of other emotions, raises extraordinarily difficult philosophical issues. When Febvre's call was answered, mainly by his French colleagues who practiced the so-called history of mentalities, historians quickly recognized that a host of historiographical and methodological problems would have to be faced. No one has faced these problems more directly, and with more profound results, than Jean Delumeau in his monumental two-volume history of fear.[3] But these are issues to which we must continually return. What will be required to write the history of an emotion, a form of sensibility, or type of affectivity? Any such history would require an investigation of gestures, images, attitudes, beliefs, language, values, and concepts. Furthermore, the problem quickly arose as to how one should understand the relationship between elite and popular culture, how, for example, the concepts and language of an elite would come to be appropriated and transformed by a collective mentality.[4] This problem is especially acute for the horror of monsters, since so many of the concepts I discuss which are necessary to our understanding of monsters come from high culture: scientific, philosophical, and theological texts. To what extent is the experience of horror, when expressed in a collective mentality, given form by these concepts? Without even attempting to answer these questions here, I want to insist that a history of horror, at both the level of elite concepts and collective mentality, must emphasize the fundamental role of description. We must describe, in much more detail than is usually done, the concepts, attitudes, and values required by and manifested in the reaction of horror. And it is not enough to describe these components piecemeal; we must attempt to retrieve their coherence, to situate them in the structures of which they are a part.[5] At the level of concepts, this demand requires that we reconstruct the rules that govern the relationships between concepts; thus, we will be able to discern the highly structured, rule-governed conceptual spaces that are overlooked if concepts are examined only one at a time.[6] At the level of mentality, we are required to place each attitude, belief, and emotion in the context of the specific collective consciousness of which it forms part.[7] At both levels, we will have to go beyond what is said or expressed in order to recover the conceptual spaces and mental equipment without which the historical texts will lose their real significance.